Computer Vision and AI in Agriculture and Agritech (2022 Use Case Overview)

Caroline Lasorsa

Product Marketing | 2022/07/15 | 8 min read

Introduction

Since the dawn of civilization, farming has been the cornerstone of food production. Historically, much of society revolved around crop production, and practices such as daylight saving time and summer vacations are popularly traced to the farming calendar. As a significant facet of our communities, food has both brought people together and fueled conflict. One thing is undeniable, however: agriculture demands years of practice and backbreaking labor, and it must keep up with ever-increasing demand. The small-town farms of our ancestors can do little to feed the masses of today. So as society has become faster paced and more reliant on technology, so has our food production. To keep up with demand, computer vision applications have made their way into farming, streamlining everything from harvesting to plant recognition to crop diagnostics.

Computer Vision: What Is It?

For robots to complete simple tasks like fetching items off a shelf or mopping up a spill, they need computer vision. Think of it as a subset of artificial intelligence that interprets visual data to automate specific tasks. A computer vision model learns to recognize patterns and to estimate an object’s location, speed, and movement, typically through a process known as deep learning. Training a model takes hundreds and sometimes thousands of images, but once it’s been built, it can improve the speed, accuracy, and output of your business initiative.

Computer vision has become increasingly prevalent across industries. You see it in self-checkout machines, food delivery robots, and autonomous vehicles. While computer vision increases throughput and efficiency in practice, getting there involves thousands of carefully labeled images for training. Creating an effective model requires data services teams, data labeling software, and the time to build it out. While some models only need a simple bounding box drawn around each object in an image, others call for more complex annotations.

Computer Vision in Agriculture

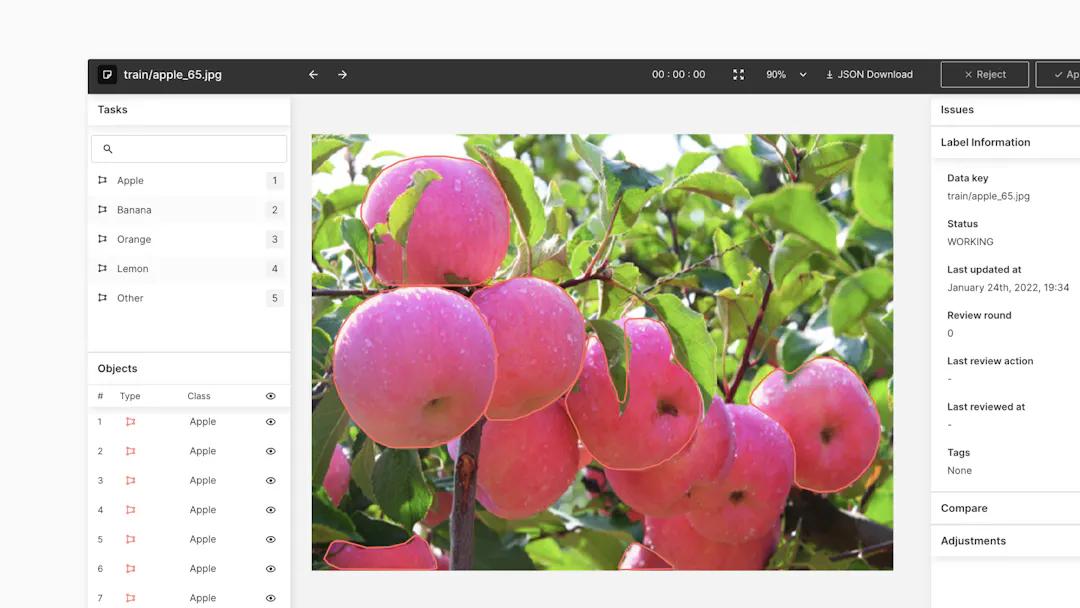

Example of how to apply computer vision for agriculture businesses

To bring computer vision into agriculture and do it well, your model has to understand the nuances of farming. This entails judging crop freshness, recognizing diseases, and assessing soil health, among other important things. In essence, a successful computer vision model in agriculture must understand the same practices that humans have been perfecting for thousands of years. So how exactly does a machine emulate the intricacies of harvesting? It all comes down to data labeling.

The Importance of Data Labeling

Building a computer vision model that can take on monotonous farming tasks involves feeding it example images and teaching it to recognize the objects in them. Within each image, you must call out, or annotate, the objects most important to your model. If your model is learning to pick oranges, for example, it must be able to recognize an orange. It sounds simple enough, but there’s a lot more to it than knowing that an orange is an orange: the model must also understand whether the fruit is fresh enough for picking, whether it’s rotten, and where it is located (on the ground or in a tree), among potentially many other factors.
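To make the orange example concrete, here is a minimal sketch of what a single label might carry. The schema is hypothetical: the class names, the attribute values, and the `is_pickable` helper are assumptions made for illustration, not the format of any particular labeling tool.

```python
from dataclasses import dataclass
from enum import Enum


class Ripeness(Enum):
    UNRIPE = "unripe"
    RIPE = "ripe"
    ROTTEN = "rotten"


class Location(Enum):
    ON_TREE = "on_tree"
    ON_GROUND = "on_ground"


@dataclass(frozen=True)
class OrangeAnnotation:
    """One labeled orange in one image (hypothetical schema)."""
    image_id: str
    # Bounding box in pixels: left, top, width, height.
    box: tuple[int, int, int, int]
    ripeness: Ripeness
    location: Location


def is_pickable(label: OrangeAnnotation) -> bool:
    """Treat a ripe orange that is still on the tree as a picking target."""
    return label.ripeness is Ripeness.RIPE and label.location is Location.ON_TREE


labels = [
    OrangeAnnotation("img_001.jpg", (120, 80, 64, 60), Ripeness.RIPE, Location.ON_TREE),
    OrangeAnnotation("img_001.jpg", (300, 410, 58, 55), Ripeness.ROTTEN, Location.ON_GROUND),
    OrangeAnnotation("img_002.jpg", (40, 95, 61, 63), Ripeness.UNRIPE, Location.ON_TREE),
]
pickable = [label for label in labels if is_pickable(label)]
print(f"{len(pickable)} of {len(labels)} labeled oranges are picking targets")
```

The point of the sketch is that the class name alone ("orange") is only one field; the attributes attached to each box are what let a model learn which fruit to pick.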

Farming with Robots

In agriculture, utilizing computer vision often means building a robot that can successfully pick and harvest crops. These machines are well-equipped with object recognition capabilities and can latch onto each crop without dropping it. What used to be laborious is now autonomous.

The Benefits:

Utilizing computer vision in agriculture improves the overall harvesting process. Adding autonomous machines into the mix minimizes the need for manual labor while simultaneously speeding up the workflow. Furthermore, faster harvesting reduces waste, as less fruit is left behind to rot. Together, these benefits lower overall costs, making computer vision a smart decision for agricultural outfits.

The Challenges:

While automating one of the world’s most important industries has its upsides, putting it into practice is easier said than done. In the world of computer vision, crop recognition is one of the most difficult tasks to train a model for, partly because the environment is far from consistent. Having your model work outside means that changes in weather and lighting affect how it perceives and recognizes the crop and its surroundings. What’s more, individual plants and fruits vary in appearance, so your model must be trained on enough examples to handle all shapes and sizes.
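One common way to expose a model to lighting variation is brightness augmentation, a technique not described in this article but widely used in practice. The sketch below is a simplified illustration in plain Python: the `adjust_brightness` function and the tiny 2x2 grayscale "image" are assumptions for the example, and a real pipeline would typically rely on an image library instead.

```python
def adjust_brightness(image, factor):
    """Scale every pixel of a grayscale image (values 0-255) and clamp to the valid range."""
    return [[min(255, max(0, round(pixel * factor))) for pixel in row] for row in image]


# A tiny stand-in for a grayscale photo of a crop.
image = [
    [100, 200],
    [50, 250],
]

# Simulate the same scene under an overcast sky and under harsh midday sun.
darker = adjust_brightness(image, 0.5)
brighter = adjust_brightness(image, 1.5)

print(darker)
print(brighter)
```

Training on several such variants of each labeled image is one way to make a model less sensitive to the time of day or the weather when the photo was taken.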

Plant Identification

Another basic use of AI and computer vision in agriculture is plant identification. Most commonly used among everyday gardeners through smartphone apps, plant identification is mostly thought of as a fun contribution to an everyday hobby. But it has a more important purpose: seeking out invasive species.

The Good:

Prior to plant identification AI, the responsibility of discovering and eradicating invasive species fell to scientists alone. Now, with smartphones, you or I could report particularly dangerous vegetation in areas that may not be on a scientist’s radar. These reports give scientists and researchers access to even more data and a clearer picture of a particular ecosystem. That, in turn, enhances their knowledge of a species and assists in eradicating it from the area.

The Drawbacks:

Plant identification works best with robust models built for professional use; machine learning models trained specifically for identifying plants in the field yield much better results. Smartphone applications designed for everyday users are not quite as accurate. That said, developers are continuously improving their recognition software to deliver more precise results.

Crop Diagnostics

Historically, a failing crop can have devastating effects on a region or a nation. The Irish Potato Famine, for example, was responsible for approximately one million deaths and led roughly a million more people to flee the country. Ireland relied heavily on the potato crop, and when potato blight (caused by a fungus-like water mold) infected it, the disease persisted for years. From 1845 to 1852, Ireland’s main food source was essentially non-existent.

Nowadays, the global economy offers food sources from all over the world, but even if it didn’t, advanced technology has offered diagnostic tools to recognize infections and prevent them from spreading. Using computer vision, it’s possible to create a model that can recognize the first signs of plant sickness and thus prevent any major effects on the harvest. Here’s how:

Plant diagnostic technology utilizes deep learning to create a computer vision model that can, with training, recognize plant abnormalities. To do so successfully, your model must be fed thousands of images to identify the plant, understand its ripeness, measure its size, and recognize all of its components, including the stem and the leaves. As part of the training process, your model must also be able to decipher abnormalities and report feedback to those overseeing it.

The Good:

Implementing computer vision in crop diagnostics has the obvious benefit of preventing a poor harvest. Catching a plant’s sickness before it proliferates can save a business from a year of disaster and lost profits. What’s more, in developing countries where food scarcity is a much greater risk, crop diagnostics tools can mean the difference between a plentiful harvest and famine. In such cases, smartphones equipped with computer vision and plant detection technology are being sent to developing countries, where they help save harvests and, in turn, lives.

The Challenges:

Creating a model requires a team of machine learning experts and data labelers to annotate hundreds to thousands of images with precision and accuracy before they are fed to the model. In agriculture especially, images are exceptionally complex. Each image includes extraneous information, like leaves and branches, that your model must sift through. So in order to deliver results, the objects in an agricultural dataset must be carefully annotated through segmentation, meaning that each object’s shape is outlined at the pixel level. This is in part why smartphone applications aren’t always as accurate; however, they are still an invaluable tool for smaller outfits and in developing countries.
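A small sketch can show why pixel-level segmentation matters more than a simple box for irregular shapes. The 6x6 mask and both helper functions below are made up for illustration; they compare how many pixels inside an object’s bounding box actually belong to the object.

```python
# A tiny 6x6 "image": 1 marks pixels labeled as the leaf, 0 is background.
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0, 0],
    [0, 1, 1, 1, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 0, 1, 1, 0, 0],
    [0, 0, 0, 0, 0, 0],
]


def bounding_box(mask):
    """Return (top, left, bottom, right), inclusive, of the labeled pixels."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for c in range(len(mask[0])) if any(row[c] for row in mask)]
    return rows[0], cols[0], rows[-1], cols[-1]


def background_share_in_box(mask):
    """Fraction of the bounding box that is NOT part of the labeled object."""
    top, left, bottom, right = bounding_box(mask)
    box_area = (bottom - top + 1) * (right - left + 1)
    object_pixels = sum(sum(row) for row in mask)
    return 1 - object_pixels / box_area


print(bounding_box(mask))
print(f"{background_share_in_box(mask):.1%} of the box is background")
```

In this toy case more than a third of the box is background. A box label would hand all of those pixels to the model as if they were leaf, which is the kind of extraneous information a segmentation mask excludes.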

Beyond Plants: Aquaculture

Aquaculture and fish farming present an easier alternative to deep sea fishing, and more recently, computer vision has helped monitor fish health, study feeding patterns, and keep out harmful parasites such as sea lice. In many instances, fish farms hold thousands of fish, meaning that monitoring every single one of them requires a large team. It becomes difficult to identify diseases within the tank, and even more difficult to pinpoint which fish are suffering the most. Treatment, then, requires excessive handling of each animal and is an invasive process overall.

To combat the challenges in aquatic farming, computer vision models can be trained to identify aquatic animals: they automatically process images of the fish and learn to make assessments based on that data.

The Benefits:

Integrating computer vision into aquaculture helps scientists understand the various patterns exhibited by the fish. If, for example, the data shows evidence of illness throughout the farm, scientists can tend to the fish in question with a more targeted approach, because the technology has advanced far enough that computer vision models can distinguish individual fish.

In addition to monitoring the animals’ health, computer vision helps us move toward a more sustainable and environmentally friendly approach to sourcing food. Neural networks have been designed to learn feeding patterns and behavioral changes, ensuring that fish are not overfed and thus reducing food waste. A better understanding of what the fish need informs the decisions and day-to-day operations of the farmers. In addition, aquaculture technology reduces the need for round-the-clock monitoring and encourages a better work-life balance among farm employees, and raising fish on farms cuts back on the disruption that deep sea fisheries cause to the marine ecosystem.

The Drawbacks:

Computer vision for marine farming is extremely complicated. Fish are in constant motion, so building reliable computer vision models is difficult, expensive, and data-hungry. In addition, underwater images are often murky, so fish identification is harder than in a well-lit, controlled environment. Another challenge of using computer vision and machine learning to identify a living species is that it grows and changes. No animal stays the same size from birth, so identifying each fish over time becomes more difficult.

Animal Health Monitoring

In addition to fish farms, computer vision and machine learning are an increasingly integral part of health monitoring for farm animals. Using artificial intelligence and video footage, we are able to detect health problems faster and more effectively than with human monitoring alone. Understanding the prevalence of disease helps stop its spread among livestock, leading to healthier and happier animals and better farming techniques.

The Upside:

Infectious diseases are a serious threat to the agricultural industry, potentially diminishing food sources for entire communities. A disease outbreak can hurt businesses economically while the animals themselves suffer. Machine learning models can be taught to recognize behavior, feeding patterns, and other movements that may indicate a problem. For example, if a pig is moving more slowly than usual, it could be injured. If the pigs in a pen are too close together during feeding times, they may be stressed and the farmers should separate some of them. Sluggish movements might also point to illness, and recognizing this can lead to earlier treatment. Having machine learning at the helm of modern farm solutions leads to positive economic outcomes for the farmers and better living conditions for the animals.
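The "moving more slowly than usual" check can be sketched in a few lines, assuming an upstream tracker already provides each animal’s position in every video frame. Everything here is hypothetical: the function names, the 10 frames-per-second rate, the per-animal baselines, and the 50% threshold are illustrative choices, not values from a real deployment.

```python
import math


def average_speed(track, fps):
    """Mean speed in pixels per second over a list of (x, y) positions, one per frame."""
    if len(track) < 2:
        return 0.0
    distance = sum(math.dist(a, b) for a, b in zip(track, track[1:]))
    return distance * fps / (len(track) - 1)


def flag_slow_animals(tracks, baselines, fps=10, ratio=0.5):
    """Return the IDs of animals moving slower than `ratio` times their own baseline speed."""
    return [
        animal_id
        for animal_id, track in tracks.items()
        if average_speed(track, fps) < ratio * baselines[animal_id]
    ]


# Positions from a (hypothetical) tracker, three frames per animal.
tracks = {
    "pig_01": [(0, 0), (3, 4), (6, 8)],        # moves 5 pixels per frame
    "pig_02": [(10, 10), (10, 11), (10, 12)],  # moves 1 pixel per frame
}
# Each animal's usual speed in pixels per second (assumed to be known from past footage).
baselines = {"pig_01": 60.0, "pig_02": 40.0}

slow = flag_slow_animals(tracks, baselines)
print(slow)
```

Comparing each animal against its own baseline, rather than a herd-wide number, is one simple design choice that avoids flagging animals that are naturally less active. A flag like this would only prompt a closer look by a farmer or veterinarian; it is not a diagnosis.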

The Challenges:

AI solutions that detect behavioral patterns and foresee illness are less accessible to smaller outfits because of their cost and data requirements; they are much more common on industrial farms than on independent ones. Using AI across major farms or slaughterhouses gives an aggregate view of health, so an illness outbreak at one farm can bias conclusions about the welfare of the animals and the quality of meat or dairy more broadly. In addition, there is a gap between the data scientists who work with computer vision and machine learning and the veterinarians who care for the animals, as AI is not commonly taught in veterinary school.

Key Takeaways

The use of AI in agriculture is a growing trend that offers more benefits than risks. The technology developed to better monitor animal behavior, help diagnose illnesses, and understand feeding habits has an economic advantage as well as an environmental one. In crop harvesting and plant studies, AI has similar advantages. With simple tools built for our smartphones, computer vision has given underprivileged farming communities the ability to identify harmful plant diseases and prevent devastating effects on food supply. And as the technology advances, biologists, farmers, and botanists are able to tend to their crops and livestock remotely. Having this option limits the need for excessive labor while assisting in the identification of behavioral or environmental changes. Tending to livestock and harvests with the aid of machine learning enhances the skills that specialists already have and helps meet the increasing demand of our global economy.

About Superb AI

Superb AI is an enterprise-level training data platform that is reinventing the way ML teams manage and deliver training data within organizations. Launched in 2018, the Superb AI Suite provides a unique blend of automation, collaboration and plug-and-play modularity, helping teams drastically reduce the time it takes to prepare high quality training datasets. If you want to experience the transformation, sign up for free today.