
How to Select Better ConvNet Architectures for Image Classification Tasks

Dasha Gurova

Community Manager | 2022/06/07 | 8 min read

Introduction

Successfully applying deep learning techniques requires more than just a good knowledge of what algorithms exist and the principles that explain how they work. Machine learning practitioners also need to know how to choose an algorithm for a particular application.

There's no go-to formula for any ML project. A huge part of improving machine learning systems is experimenting until you find a solution that works well for your use case. However, an overwhelming number of tools and model architectures are available for computer vision tasks. This article provides you with a strategy to select a suitable architecture for your image classification project.

Image Classification Task Overview

Image classification is a task that attempts to comprehend an image as a whole. The goal is to classify the image by assigning it a specific label. Image classification can be considered a fundamental problem because it forms the basis for other computer vision tasks like object localization, detection, and segmentation.

The ImageNet Challenge is the (unofficial) benchmark standard for most computer vision algorithms when it comes to image classification. It was an annual competition, held from 2010 to 2017, that used subsets of ImageNet, a large dataset of annotated photographs; the classification subset comprises over 1 million images across 1,000 object classes. Machine learning on images remained a challenging task until 2012, when a deep convolutional neural network called AlexNet outperformed every other entry in the ImageNet Challenge by a wide margin and caught everyone’s attention. Ever since, convolutional neural networks, or ConvNets, have evolved very fast and now match or surpass reported human-level accuracy on some visual tasks, including ImageNet classification.

Fast forward to 2022, and Vision Transformer, or ViT, models are currently topping the charts. The Transformer architecture, initially proposed for natural language processing, relies on the self-attention mechanism: when predicting each word, the model learns which parts of the input text sequence to focus on. The Vision Transformer adapts the attention idea to images, where the equivalent of words are the patches that the input image is broken into. The concept of applying the Transformer architecture to images is very interesting and potentially the source of breakthroughs. At the time of writing, ViT models scale well with large datasets but tend to underperform ConvNets when trained on small ones. We don’t understand them fully yet and are just starting to put ViT models into practice.
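To make the "patches as words" idea concrete, here is a minimal sketch in plain Python. The function names are made up for this illustration, and a real ViT additionally projects each flattened patch through a learned linear layer and adds position information, which is not shown here.

```python
def patch_grid(image_size, patch_size, channels=3):
    """Return (number of patches, values per flattened patch) for a square image."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    return patches_per_side ** 2, patch_size * patch_size * channels


def split_into_patches(image, patch_size):
    """Split a 2-D list of pixels (H x W) into flattened patches, in row-major order."""
    height, width = len(image), len(image[0])
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patches.append([image[r][c]
                            for r in range(top, top + patch_size)
                            for c in range(left, left + patch_size)])
    return patches


# A 224x224 RGB image cut into 16x16 patches gives a "sentence" of 196 tokens,
# each holding 16 * 16 * 3 = 768 raw values.
print(patch_grid(224, 16))  # (196, 768)
```

The sequence of patches then plays the same role for the Transformer that a sequence of word tokens plays in NLP.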

ConvNets, on the other hand, have been used in practice for more than a decade and have become very effective and popular thanks to the democratization of data and computational power and the abundance of tools and frameworks that followed. Since the goal of this article is to help practitioners choose a model architecture for real-world computer vision problems, we will focus on supervised image classification using ConvNets.

Selecting a ConvNet Architecture

There are plenty of ConvNet architectures for image classification that you can employ for real-world computer vision projects. Choosing which one to use is not an exact science, but any machine learning project should start by setting goals and expectations.

Determine Desired Performance

How can you determine a reasonable level of performance to expect for your specific use case? Model accuracy and inference speed both matter to ML engineers:

• Accuracy - We’d like to use a model that gives us the highest accuracy, which means a better experience for end-users.

• Speed of model’s training and inference - We’d want the model to train and generate predictions as fast as possible because, in production, we might have to serve thousands of users and need to retrain the model quickly if any problems occur.

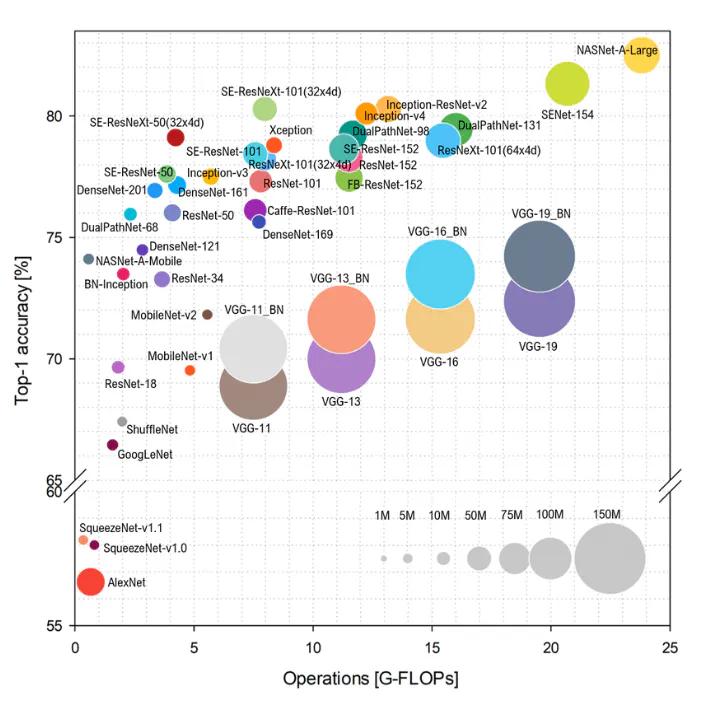

Generally, we need to use a deeper (larger) model to get higher accuracy. But with a larger model comes a larger number of parameters that make it slower to execute. To visualize the accuracy/complexity tradeoff among common SOTA architectures in computer vision, you could refer to the Benchmark Analysis of Representative Deep Neural Network Architectures paper (published in 2018) that features a very useful chart.

Source: Benchmark Analysis of Representative Deep Neural Network Architectures

For most deep learning deployments in the industry, we should consider the accuracy level that is necessary for an application to be safe, computationally affordable, and appealing to users. For example, suppose you know that the end goal of your project is to deploy the model on edge devices. In that case, complexity is a more critical concern, and you might have to sacrifice a bit of accuracy (the MobileNet architecture is a good choice here).

On the other hand, if training cost and prediction latency are not a concern for your project, and you are after the highest accuracy, you might want to choose the largest/fanciest model or even consider an ensemble of several model architectures.
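One way to turn these considerations into a decision rule is sketched below: filter the candidates by your requirements, then take the most accurate one that remains. The `Candidate` class, the `pick_model` function, and all numbers in the usage example are hypothetical placeholders; in practice the accuracy and latency values would come from measurements on your own data and target hardware.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    name: str
    accuracy: float    # validation accuracy in [0, 1], measured on your data
    latency_ms: float  # mean inference latency on the target hardware


def pick_model(candidates: Sequence[Candidate],
               min_accuracy: float,
               max_latency_ms: float) -> Optional[Candidate]:
    """Return the most accurate candidate that meets both requirements, or None."""
    feasible = [c for c in candidates
                if c.accuracy >= min_accuracy and c.latency_ms <= max_latency_ms]
    if not feasible:
        return None
    # Prefer higher accuracy; break ties in favor of lower latency.
    return max(feasible, key=lambda c: (c.accuracy, -c.latency_ms))


# Made-up numbers, for illustration only.
candidates = [
    Candidate("small", accuracy=0.88, latency_ms=12.0),
    Candidate("medium", accuracy=0.92, latency_ms=35.0),
    Candidate("large", accuracy=0.94, latency_ms=140.0),
]
edge_choice = pick_model(candidates, min_accuracy=0.85, max_latency_ms=20.0)
cloud_choice = pick_model(candidates, min_accuracy=0.85, max_latency_ms=500.0)
print(edge_choice.name, cloud_choice.name)  # small large
```

A `None` result is informative too: it means no candidate satisfies the stated goals, so either the requirements or the candidate list needs to change.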

Once you have an idea of your realistic desired performance, your architecture search decisions will be guided by this goal. In the next section, let’s zoom in on some of the most commonly used SOTA ConvNet architectures.

Popular ConvNet Architectures

This section briefly looks at ConvNet architectures that are widely used today, covering their evolution, advantages, and disadvantages.

VGG Family

To improve upon AlexNet, researchers started to increase the depth, that is, the number of convolutional layers. Adding more layers resulted in better classification accuracy. The VGG architecture (proposed in 2014) had a deeper network structure and improved accuracy, but it was also roughly twice the size of AlexNet and slower to run. VGG16 has 16 weight layers (13 convolutional and 3 fully connected), and the deeper VGG19 variant has 19. Both use small 3x3 convolutions exclusively: a stack of 3x3 layers covers the same receptive field as a single larger filter with fewer parameters, so accuracy does not suffer.
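The arithmetic behind VGG's preference for 3x3 filters can be checked with a short sketch. The helper names are made up for this example, biases are ignored, and every layer is assumed to have the same number of input and output channels with stride 1.

```python
def conv_weights(kernel_size, channels, num_layers=1):
    """Weights in a stack of square convolutions with `channels` in and out (no biases)."""
    return num_layers * kernel_size * kernel_size * channels * channels


def receptive_field(kernel_size, num_layers):
    """Receptive field of `num_layers` stacked stride-1 convolutions."""
    return num_layers * (kernel_size - 1) + 1


channels = 64
# Two stacked 3x3 layers see a 5x5 window; three see a 7x7 window.
print(receptive_field(3, 2), receptive_field(3, 3))             # 5 7
# Same window, fewer weights than one large filter.
print(conv_weights(3, channels, 2), conv_weights(5, channels))  # 73728 102400
print(conv_weights(3, channels, 3), conv_weights(7, channels))  # 110592 200704
```

The stacked version also applies a non-linearity after each small layer, so it is not just cheaper but can represent more complex functions over the same window.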

Why choose VGG architecture? Its simple architecture makes it relatively easy to understand and implement. It performs well on common image classification scenarios. VGG architectures have also been found to learn hierarchical elements of images like texture and content, making them popular choices for training style transfer models.

Inception

The Inception network came to the world in 2014. The main hallmark of this architecture is building a convolutional neural network while improving the utilization of computing resources. When lining up the convolutional and pooling layers in a network, the designer has multiple choices, and the best decision is not obvious. Instead of deciding beforehand which sequence of layers is the most effective, the Inception module provides several alternatives that the network can choose from based on data and training.
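As a rough sketch of what "several alternatives" means, an Inception-style module runs a few branches in parallel (1x1, 3x3, and 5x5 convolutions plus a pooling branch) and concatenates their outputs along the channel axis. The snippet below only counts channels and weights; the function names are invented for this example, biases are ignored, and the channel sizes in the usage line are illustrative values rather than a recommendation.

```python
def conv_params(kernel_size, in_channels, out_channels):
    """Weights of one square convolution (no biases)."""
    return kernel_size * kernel_size * in_channels * out_channels


def inception_module(in_channels, c1, c3_reduce, c3, c5_reduce, c5, pool_proj):
    """Return (output channels, weight count) for a four-branch Inception-style module."""
    branch_weights = [
        conv_params(1, in_channels, c1),                                         # 1x1 branch
        conv_params(1, in_channels, c3_reduce) + conv_params(3, c3_reduce, c3),  # 1x1 then 3x3
        conv_params(1, in_channels, c5_reduce) + conv_params(5, c5_reduce, c5),  # 1x1 then 5x5
        conv_params(1, in_channels, pool_proj),                                  # pooling then 1x1
    ]
    out_channels = c1 + c3 + c5 + pool_proj  # branch outputs are concatenated
    return out_channels, sum(branch_weights)


out_channels, weights = inception_module(192, c1=64, c3_reduce=96, c3=128,
                                         c5_reduce=16, c5=32, pool_proj=32)
print(out_channels, weights)  # 256 163328
```

The 1x1 "reduce" convolutions in front of the larger filters shrink the channel count before the expensive operation, which is a large part of how the module keeps its compute budget in check.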

Why choose Inception architecture? It’s a deeper CNN than VGG, with far fewer parameters and comparable or better accuracy on ImageNet, depending on the version. This results in a shorter training time and a smaller trained model. On the other hand, this architecture can be pretty complex to implement from scratch.

ResNet

ResNet, or Residual Neural Network, introduced in 2015, continued the trend of increasing the depth of the models. One downside of adding too many layers is that the network becomes harder to train: gradients can vanish as they are propagated back through many layers, and beyond a certain depth even the training accuracy starts to degrade. To solve this, ResNet introduced a novel residual module architecture with skip connections that allow information to flow from earlier layers in the network to later layers, creating an alternative shortcut path for the gradient to flow through. This fundamental breakthrough allowed for the training of extremely deep neural networks with hundreds of layers. The most common variant of ResNet is ResNet50, containing 50 layers, but larger variants can have over 100 layers.
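The core of a residual module fits in one line: the block outputs its input plus a learned correction. The sketch below uses plain Python lists instead of tensors, and `residual_block` and the toy transforms are names invented for this illustration; a real block would use convolutions, normalization, and activations as the transform.

```python
from typing import Callable, List

Vector = List[float]


def residual_block(x: Vector, transform: Callable[[Vector], Vector]) -> Vector:
    """Return transform(x) + x: the learned residual plus the skip connection."""
    fx = transform(x)
    if len(fx) != len(x):
        raise ValueError("transform must preserve the input shape")
    return [f + xi for f, xi in zip(fx, x)]


def zero_transform(x: Vector) -> Vector:
    """A layer that has learned nothing yet."""
    return [0.0 for _ in x]


# Even 100 stacked blocks that contribute nothing still pass the signal through
# unchanged, because each block falls back to the identity mapping.
signal = [1.0, -2.0, 3.0]
for _ in range(100):
    signal = residual_block(signal, zero_transform)
print(signal)  # [1.0, -2.0, 3.0]
```

The same shortcut that carries the signal forward also carries the gradient backward, which is why very deep stacks of such blocks remain trainable.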

Why choose ResNet architecture? ResNet is widely used because it performs really well on classification tasks. On top of that, it uses global average pooling instead of the large fully connected layers found in earlier architectures like VGG, which keeps the parameter count down and helps training. If you want something tried and true, this is a good choice.

Xception

The Xception model was proposed by François Chollet (creator of Keras) in 2017. This model is an extension of the Inception architecture that replaces the Inception modules with depthwise separable convolutions and adds ResNet-style skip connections.

Why choose Xception architecture? Xception combines ResNet and Inception architectural features in a simpler design, which makes the architecture easy to define and modify. Its linear stack of layers makes training faster than Inception, and it slightly outperforms Inception in terms of accuracy on ImageNet.

MobileNet

The MobileNet architecture was developed by Google to provide a neural network suitable for edge devices such as smartphones or tablets. MobileNet introduced two important innovations: depthwise separable convolutions and a width multiplier (a hyperparameter). Depthwise separable convolutions replace regular convolution layers, use fewer parameters, and are more computationally efficient. The width multiplier controls how many channels, and therefore how many parameters, each convolution layer uses. This allows for the creation of several networks along a tradeoff curve of size and speed versus accuracy.
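Both ideas are easy to quantify. A depthwise separable convolution splits a regular convolution into a per-channel spatial filter (depthwise) followed by a 1x1 convolution that mixes channels (pointwise). The sketch below compares weight counts; the function names are made up for this example, biases are ignored, and the width multiplier is applied by simple truncation, which is a simplification of how real implementations round channel counts.

```python
def standard_conv_params(kernel_size, c_in, c_out):
    """Weights of a regular convolution."""
    return kernel_size * kernel_size * c_in * c_out


def separable_conv_params(kernel_size, c_in, c_out):
    """Depthwise (one k x k filter per input channel) plus pointwise (1x1) weights."""
    return kernel_size * kernel_size * c_in + c_in * c_out


def scale_channels(channels, alpha):
    """Apply a width multiplier alpha to a channel count (simplified rounding)."""
    return max(1, int(channels * alpha))


c_in, c_out = 128, 256
print(standard_conv_params(3, c_in, c_out))   # 294912
print(separable_conv_params(3, c_in, c_out))  # 33920

# Halving the width shrinks the layer further, at some cost in accuracy.
alpha = 0.5
print(separable_conv_params(3, scale_channels(c_in, alpha), scale_channels(c_out, alpha)))  # 8768
```

For this layer the separable version needs roughly a ninth of the weights, and the width multiplier gives a second, independent knob to trade accuracy for size.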

Why choose MobileNet architecture? An array of models can be created with this architecture so that more powerful devices can receive larger, more accurate models. In contrast, less powerful devices can use smaller, less accurate models. If you need a model that you can deploy on edge devices with scarce computational resources, MobileNet is the way to go.

EfficientNet

EfficientNet, also developed at Google, builds on the ideas of MobileNetV2. Inverted residual bottlenecks are again the basic building blocks, but the optimization goal was tweaked towards prediction accuracy rather than mobile inference latency. Convolutional architectures have three main ways of scaling: use more layers, use more channels in each layer, or use higher-resolution input images. The EfficientNet paper points out that these scaling axes are not independent, so this family of networks is scaled along all three axes together rather than along just one.
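The joint scaling rule can be sketched as follows: a single coefficient, here called `phi`, raises a fixed base for each axis to the same power. The base constants below are the ones reported in the EfficientNet paper for its baseline network; the function names are invented for this example, and the actual model family rounds the resulting layer counts, channel counts, and resolutions in ways not shown here.

```python
ALPHA = 1.2   # depth base: multiplier on the number of layers
BETA = 1.1    # width base: multiplier on the number of channels
GAMMA = 1.15  # resolution base: multiplier on the input image side


def compound_scale(phi):
    """Return (depth, width, resolution) multipliers for scaling coefficient phi."""
    return ALPHA ** phi, BETA ** phi, GAMMA ** phi


def flops_multiplier(phi):
    """Approximate compute growth: linear in depth, quadratic in width and resolution."""
    depth, width, resolution = compound_scale(phi)
    return depth * width ** 2 * resolution ** 2


for phi in range(4):
    depth, width, resolution = compound_scale(phi)
    print(phi, round(depth, 2), round(width, 2), round(resolution, 2),
          round(flops_multiplier(phi), 2))
```

With these bases, each step of `phi` roughly doubles the compute (the product is about 1.92), so the coefficient acts as a single dial for how much extra budget you are willing to spend.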

Why choose EfficientNet architecture? This architecture is a workhorse of many applied ML teams because it offers strong accuracy for a given parameter count. If you don’t have size/speed restrictions (for example, inference runs on an auto-scaling cloud system) and want the best/fanciest model, consider EfficientNet.

Neural Architecture Search

For much of the last decade, new state-of-the-art results were achieved with new network architectures manually created by researchers. As with many tasks that rely on human intuition and experimentation, someone eventually asked if a machine could do it better. Neural architecture search (NAS) automates the process of neural network design. Given a goal (e.g., model accuracy) and constraints (network size or inference latency), these methods rearrange composable blocks of layers to form new architectures. Though NAS has found new architectures that outperform their human-designed peers, the process is incredibly computationally expensive.
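The overall loop (propose an architecture from composable blocks, reject it if it breaks the constraint, score it, keep the best) can be illustrated with the simplest possible strategy, random search. Everything in this sketch is a toy: the block names, their costs, and especially the score, which stands in for the expensive step of training each candidate and measuring its validation accuracy. Published NAS methods use more sophisticated search strategies than this.

```python
import random

# Hypothetical building blocks: name -> (parameter cost, toy quality score).
BLOCKS = {
    "conv3x3": (9, 3.0),
    "conv5x5": (25, 4.0),
    "sep3x3": (2, 2.5),
    "identity": (0, 0.0),
}


def random_search(num_layers, max_params, trials, seed=0):
    """Sample architectures at random and keep the best one within the parameter budget."""
    rng = random.Random(seed)
    names = sorted(BLOCKS)
    best_arch, best_score = None, float("-inf")
    for _ in range(trials):
        arch = [rng.choice(names) for _ in range(num_layers)]
        params = sum(BLOCKS[block][0] for block in arch)
        if params > max_params:
            continue  # violates the size constraint
        # Placeholder for "train this network and measure validation accuracy".
        score = sum(BLOCKS[block][1] for block in arch)
        if score > best_score:
            best_arch, best_score = arch, score
    return best_arch, best_score


arch, score = random_search(num_layers=4, max_params=40, trials=200)
print(arch, score)
```

The cost of real NAS comes from the line marked as a placeholder: every candidate evaluation is a training run, and a search may need thousands of them.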

Why choose NAS architecture? Besides being computationally expensive to find, the network architectures discovered by NAS techniques typically don’t look like those designed by humans. We often don’t know why they work well, so it is hard to transfer their ideas to other architectures. Nevertheless, NAS achieves high accuracy, and, in some cases, that’s enough.

Tips to Get Started

Start simple

We talked about a few ConvNet architectures that you can employ for your image classification project. It is easy to get carried away and jump straight into fine-tuning sophisticated models with all the fancy tools and techniques available nowadays. But if there is one piece of advice we hear the most from practitioners, it is to always start with the simplest appropriate model, or a baseline model.

Why do you need a baseline model?

Regardless of the scenario, a benchmark is needed to properly assess a model. A simple baseline model can serve as that benchmark, enabling a more informative evaluation of the other model architectures you experiment with.

Your baseline model can also help indicate if your data is insufficient or inadequate for the task in question. For example, if a classification model fails to predict certain classes, you should place effort into addressing this flaw. Imagine going through the hassle of building a complex model only to learn that it is trained with faulty data. After you've established a baseline model, you can incrementally improve the performance by tuning hyperparameters, working with the data, and trying other architectures based on the findings from the simple model.
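Even before training a simple network, a trivial predictor that always outputs the most common class gives a floor that any baseline should beat, and per-class recall shows which classes a model fails on. The sketch below is one possible way to compute both; the function names and the toy labels are made up for this example.

```python
from collections import Counter


def majority_class(train_labels):
    """Return the most frequent label in the training set."""
    return Counter(train_labels).most_common(1)[0][0]


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_recall(y_true, y_pred):
    """For each class, the fraction of its examples that were predicted correctly."""
    totals = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label: hits[label] / totals[label] for label in totals}


labels = ["cat"] * 6 + ["dog"] * 3 + ["bird"]
predictions = [majority_class(labels)] * len(labels)

print(accuracy(labels, predictions))          # 0.6
print(per_class_recall(labels, predictions))  # {'cat': 1.0, 'dog': 0.0, 'bird': 0.0}
```

If a trained model's overall accuracy looks fine but its per-class recall resembles the output above, the problem is more likely in the data (class imbalance, too few examples, label errors) than in the architecture.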

Choose a model that you can understand well

Don't obsess about taking the algorithm that was just published at some conference last week; instead, pick a model that you can understand well, especially if your goal is to deploy this model in production. A model architecture that you understand will be easier to debug, tweak, improve, and iterate on. For practical image classification applications, a reasonable ConvNet architecture with good data will often do just fine and will, in fact, outperform a great algorithm with not-so-good data. A good start would be choosing a simple ConvNet architecture (for instance, VGG with fewer layers) that performs well on ImageNet and trying it with your data.

Choose a model that you can quickly implement

Your first model will likely not be your last. Choose a good, open-source implementation and use that to get going quickly. Or even better, employ transfer learning to train the chosen architecture on your data quickly. Transfer learning is a valuable technique that allows you to leverage pre-trained weights of the most popular architectures. High-level deep learning frameworks like Keras and PyTorch have made it incredibly easy to use transfer learning, and it often takes only a few lines of code to run or train state-of-the-art architectures on your data. Transfer learning is a topic in itself and is out of the scope of this article.
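As an indication of how little code this takes, here is a minimal Keras sketch that puts a new classification head on a frozen, ImageNet-pretrained MobileNetV2. It assumes TensorFlow 2.6 or later is installed and that the pre-trained weights can be downloaded on first use; the function name, the choice of backbone, and the hyperparameters are assumptions made for this example, not a recommendation from the article.

```python
def build_transfer_model(num_classes, input_shape=(224, 224, 3)):
    """Frozen ImageNet-pretrained MobileNetV2 backbone with a new softmax head."""
    # Imported inside the function so this sketch can be loaded without TensorFlow.
    import tensorflow as tf

    base = tf.keras.applications.MobileNetV2(
        input_shape=input_shape, include_top=False, weights="imagenet")
    base.trainable = False  # keep the pre-trained features fixed at first

    inputs = tf.keras.Input(shape=input_shape)
    # MobileNetV2 expects pixel values in [-1, 1]; inputs are assumed to be in [0, 255].
    x = tf.keras.layers.Rescaling(1.0 / 127.5, offset=-1.0)(inputs)
    x = base(x, training=False)
    x = tf.keras.layers.GlobalAveragePooling2D()(x)
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)

    model = tf.keras.Model(inputs, outputs)
    model.compile(optimizer="adam",
                  loss="sparse_categorical_crossentropy",  # integer class labels
                  metrics=["accuracy"])
    return model
```

A typical next step would be `model = build_transfer_model(num_classes=5)` followed by `model.fit(train_ds, validation_data=val_ds, epochs=5)`, where `train_ds` and `val_ds` are placeholders for your own datasets of image batches and integer labels.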

Create your own benchmark

Of course, there might be multiple model architectures that can achieve desirable performance for your project. After you establish a baseline with the most straightforward, appropriate model, you can explore your options further by experimenting with a few architectures and picking the best one that meets your project’s performance goal. Use open-source tools and transfer learning to quickly implement and train other candidate architectures to see if they can outperform your baseline model or offer complexity improvements.
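A small harness that evaluates every candidate on the same held-out data, recording both accuracy and latency, is often enough for such a benchmark. The sketch below works with any prediction function that maps one input to one label; the function names and the two toy "models" are invented for this example, and timings measured this way depend on the machine they run on.

```python
import time


def evaluate(predict, inputs, labels, repeats=3):
    """Return (accuracy, mean latency per input in milliseconds) for one candidate."""
    predictions = [predict(x) for x in inputs]  # this first pass doubles as a warm-up
    correct = sum(p == y for p, y in zip(predictions, labels))
    start = time.perf_counter()
    for _ in range(repeats):
        for x in inputs:
            predict(x)
    elapsed = time.perf_counter() - start
    return correct / len(labels), 1000.0 * elapsed / (repeats * len(inputs))


def compare(candidates, inputs, labels):
    """candidates: dict of name -> predict function. Rows are sorted by accuracy, best first."""
    rows = [(name, *evaluate(predict, inputs, labels))
            for name, predict in candidates.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Toy stand-ins for a baseline and a stronger candidate.
inputs = [0, 1, 2, 3]
labels = [0, 1, 0, 1]
candidates = {
    "baseline_always_zero": lambda x: 0,
    "candidate_parity": lambda x: x % 2,
}
for name, acc, latency_ms in compare(candidates, inputs, labels):
    print(f"{name}: accuracy={acc:.2f}, latency={latency_ms:.4f} ms")
```

Keeping the baseline in the same table as the other candidates makes it obvious whether a more complex architecture actually earns its extra cost.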

Conclusion

We discussed the most well-known and commonly used convolutional neural network architectures that could be solid choices for your computer vision project. We explored how to select the best architecture, taking into account the goals and resources of your project. Computer vision is a continuously growing domain, and there is always a new model to look forward to and push the boundaries further. In most real-world applications, though, you would want to avoid large and complex models and select a model that is computationally affordable for your project and provides appropriate accuracy.

Regardless of the ConvNet architecture you choose for your image classification tasks, Superb AI is always here and ready to help on both the tooling and support side. Use our labeling suite to quickly and easily classify your images and videos, create a custom auto-label to do the work for you, or work with our managed services which can expertly handle these tasks for you from start to finish. Not sure where to get started? Reach out to our team, and we can put you on the right track.
