Product

New Release: Reviewer Role for More Effective and Structured QA Processes

2022/10/20 | 2 min read

What’s New?

As any machine learning practitioner knows, building an effective model takes more than uploading your data and flipping a switch. Organizing your team, establishing ground truth, implementing automation, and creating an effective QA workflow are all crucial. Even so, establishing ground truth and automating QA are only the tip of the iceberg.

Our goal at Superb AI is to make labeling data easier for you, which in turn leads to better models. To improve data quality assurance pipelines, we're introducing the Reviewer role, a way to make your team's auditing workflow smoother. The Reviewer role lets you structure access to label review with clearly scoped, well-defined permissions, so your team can audit labels while your data stays secure.

Why introduce the Reviewer role?

Properly auditing your data workflow is comparable to raising a vegetable garden. Without the effort to keep your plants alive, planting seeds will not yield the results you desire.

A proper QA pipeline is just as crucial when building a successful computer vision model. Without one, your autonomous vehicles may run into trouble, illnesses may go undetected, and your business venture may fail.

We understand the importance of a streamlined, rigorous workflow. Just as a garden needs the right tools, so does your QA process, and we aim to provide the best ones. Formally defining auditing responsibilities through the Reviewer role within your team hierarchy makes your workflow more cohesive.

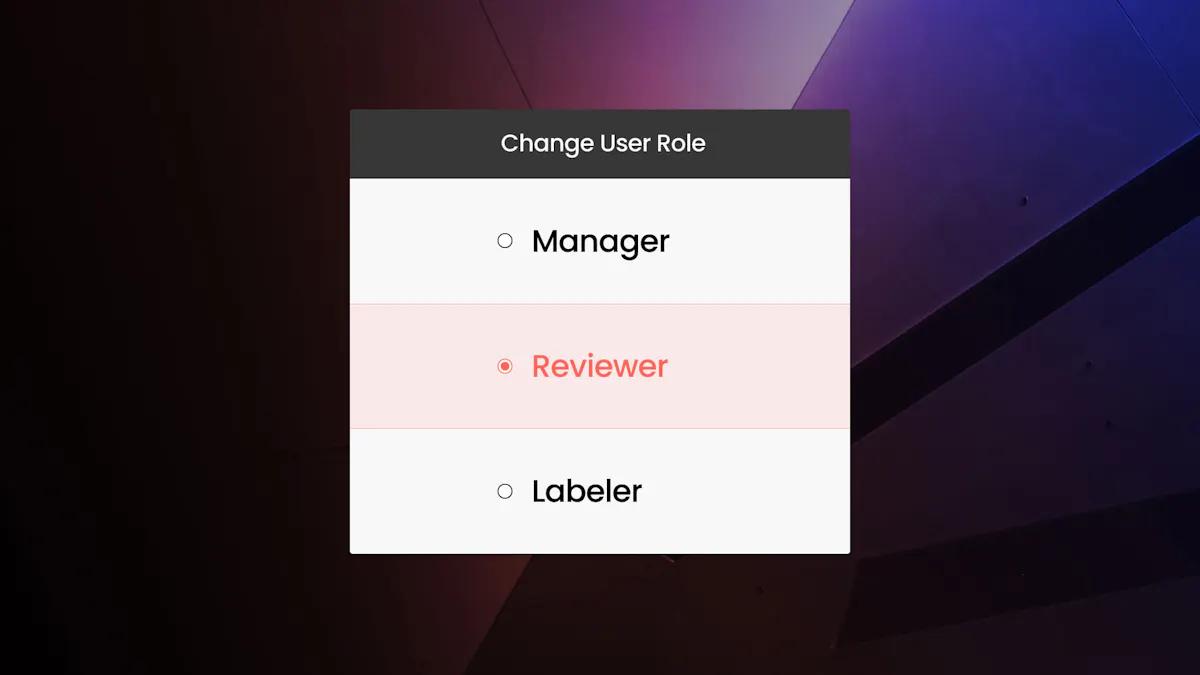

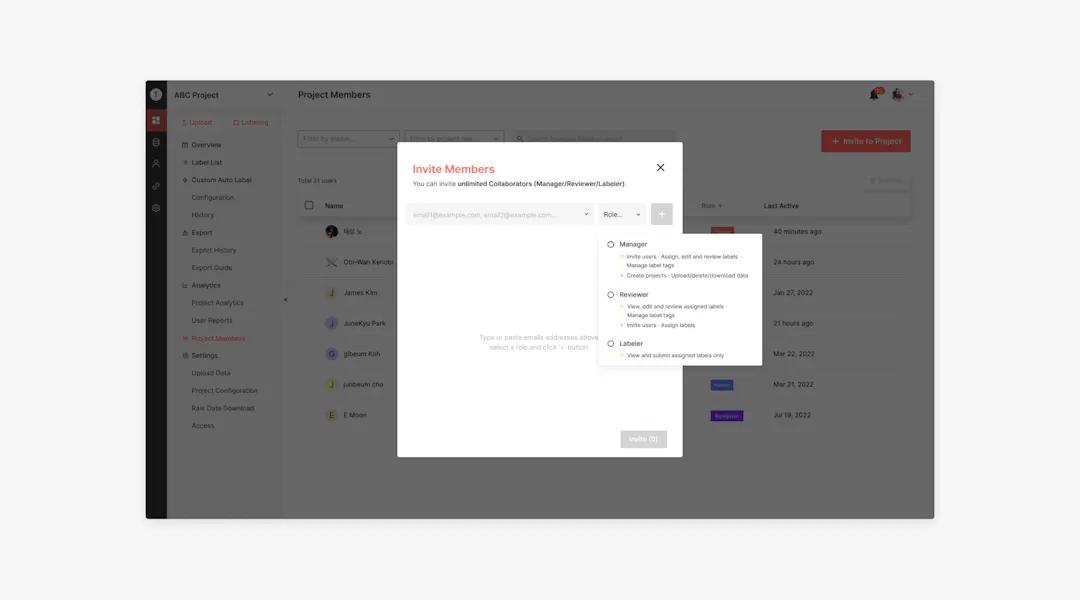

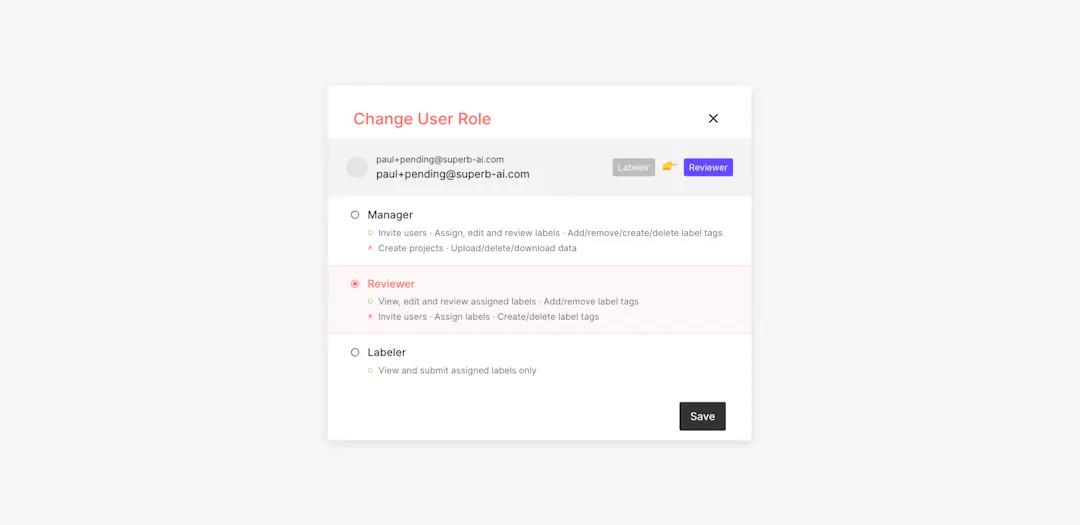

What tasks can reviewers perform?

Reviewers can perform a variety of tasks. They can view, edit, and review the labels they have been requested to review (that is, the labels assigned to them for review). They can also add tags to and remove tags from labels. Unlike managers, they cannot perform project management functions such as inviting members, assigning labels, or creating and deleting tags.
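To make the split between the two roles concrete, here is a minimal sketch of the permission boundary described above as a simple role-to-permission map. The role names come from this post, but the `Permission` values and the `can` helper are hypothetical illustrations, not Superb AI's API or internal model. Two further assumptions: Managers hold every Reviewer permission, and the "assigned for review" check also applies to a Reviewer's tag edits.

```python
from enum import Enum, auto


class Permission(Enum):
    """Hypothetical permission names, for illustration only."""
    VIEW_LABEL = auto()
    EDIT_LABEL = auto()
    REVIEW_LABEL = auto()
    ADD_LABEL_TAG = auto()
    REMOVE_LABEL_TAG = auto()
    INVITE_MEMBER = auto()
    ASSIGN_LABEL = auto()
    CREATE_TAG = auto()
    DELETE_TAG = auto()


# Label-level actions a Reviewer may take, per the post.
LABEL_ACTIONS = frozenset({
    Permission.VIEW_LABEL,
    Permission.EDIT_LABEL,
    Permission.REVIEW_LABEL,
    Permission.ADD_LABEL_TAG,
    Permission.REMOVE_LABEL_TAG,
})

# Project management functions reserved for Managers, per the post.
PROJECT_MANAGEMENT = frozenset({
    Permission.INVITE_MEMBER,
    Permission.ASSIGN_LABEL,
    Permission.CREATE_TAG,
    Permission.DELETE_TAG,
})

ROLE_PERMISSIONS = {
    "Reviewer": LABEL_ACTIONS,
    # Assumption for this sketch: Managers also hold every Reviewer permission.
    "Manager": LABEL_ACTIONS | PROJECT_MANAGEMENT,
}


def can(role: str, permission: Permission, assigned_for_review: bool = False) -> bool:
    """Return True if `role` may perform `permission` in this sketch.

    Reviewers act only on labels they were requested to review, so every
    Reviewer action also requires `assigned_for_review=True` (applying this
    to tag edits is an assumption). Unknown roles get no permissions.
    """
    if permission not in ROLE_PERMISSIONS.get(role, frozenset()):
        return False
    if role == "Reviewer":
        return assigned_for_review
    return True


if __name__ == "__main__":
    print(can("Reviewer", Permission.EDIT_LABEL, assigned_for_review=True))     # True
    print(can("Reviewer", Permission.EDIT_LABEL))                               # False
    print(can("Reviewer", Permission.INVITE_MEMBER, assigned_for_review=True))  # False
    print(can("Manager", Permission.ASSIGN_LABEL))                              # True
```

Keeping the project management permissions in their own set makes the difference between the roles a single set union, so adding or removing a management function changes only one place.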

How else is this different from the manager role?

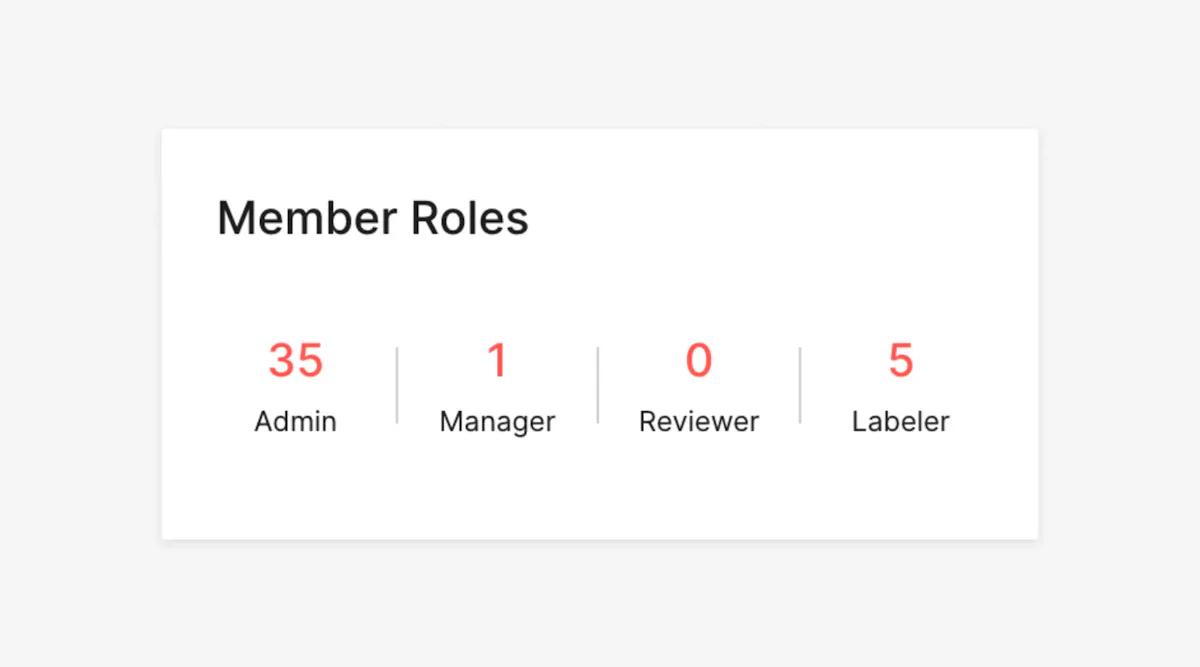

In Superb AI's existing structure, auditing is handled through the Manager role and its permissions. Although this approach has given teams like yours full access to labels for quality control, managers are not the only ones qualified to audit. A formal Reviewer role lets you grant auditing access without granting full project access, helping your team keep proprietary data more private.

As a team leader, you no longer need to worry about granting too much access to your project, nor do you need to burden upper management with auditing. Keeping roles well-defined helps you organize your team while protecting your data.

What this means for your team

Assigning Reviewers from the start takes the guesswork out of building your auditing workflow later on, helping your team meet its defined goals and work more efficiently. With this new role, review tasks can be assigned to specific team members, which gives managers better visibility into each person’s workload so they can make adjustments along the way.

Like other roles in the Superb AI Suite, Reviewers can be invited as project or team members. Many Reviewers are also responsible for labeling and can switch seamlessly between the two roles.

We've also added a Reviewer tab to user reports to provide more depth and context for project tracking. It includes metrics such as reviewed label and frame counts, total approvals and rejections made by each reviewer, and more.

Get Started

Superb AI’s labeling platform is well-equipped to boost your data annotation and auditing workflows through existing features and new additions like the Reviewer role. With a dedicated role for auditing, project managers can have greater confidence in their data’s security and more visibility into their team’s progress.

Curious about Superb AI? Our platform handles diverse datasets and supports teams ranging from small startups to large enterprises. Sign up for a free trial, or view our pricing page to find the best plan for your machine learning project.

About Superb AI

Superb AI is an enterprise-level training data platform that is reinventing the way ML teams manage and deliver training data within organizations. Launched in 2018, the Superb AI Suite provides a unique blend of automation, collaboration, and plug-and-play modularity, helping teams drastically reduce the time it takes to prepare high-quality training datasets. If you want to experience the transformation, sign up for free today.