Product Updates: March 2022 Roundup

2022/03/31 | 4 min read

Introduction

One of the greatest challenges machine learning teams face today is the sheer number of time-consuming steps between gathering data for your computer vision projects and building high-performing models. As these steps can be incredibly tedious and laborious, the tools you use to get them done can make or break your project and team (from a productivity and morale perspective). That’s why we’re always hard at work trying to make your labeling and project management experience as efficient and straightforward as possible. Over the last month, we’ve introduced several significant workflow-enhancing improvements to continually streamline your experience, including sending objects to front/back via shortcuts, near real-time analytics, improved filtering, and more.

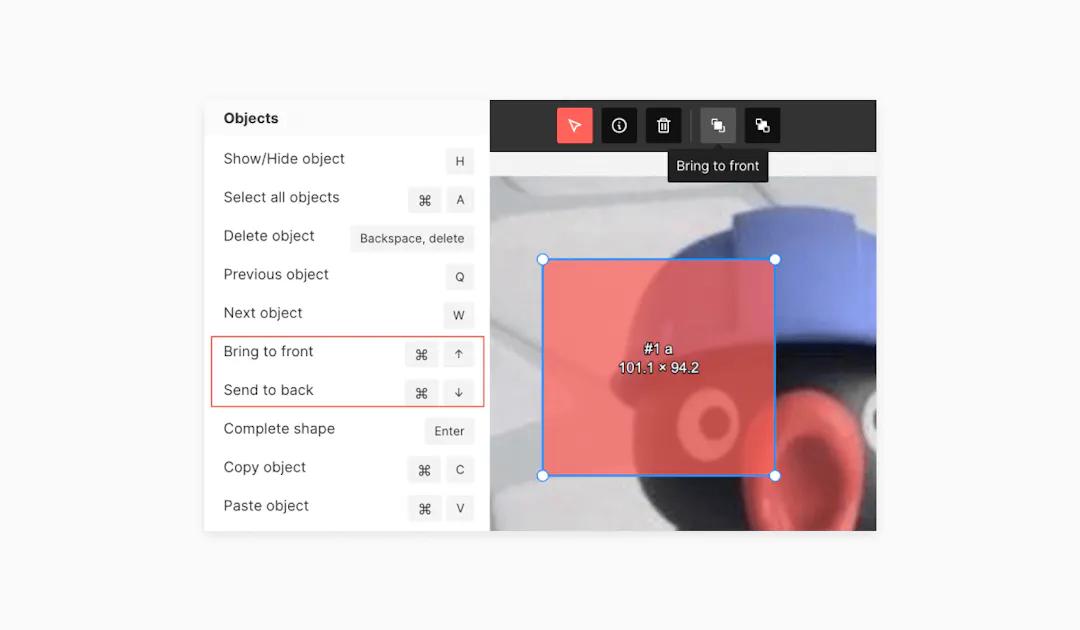

Bring objects to the front, so you never miss a pixel again

We’ve added the ability to bring objects to the front or back of other objects. This makes working with overlapping objects a breeze and helps ensure that pixels are never missed when annotating your data. Overall, this functionality makes more precise and accurate annotations possible and easy to achieve. Selected objects are temporarily treated as the foremost object to make immediate modifications easier.

This functionality is available via bring to front and send to back buttons in the top navbar, as well as nifty shortcuts.

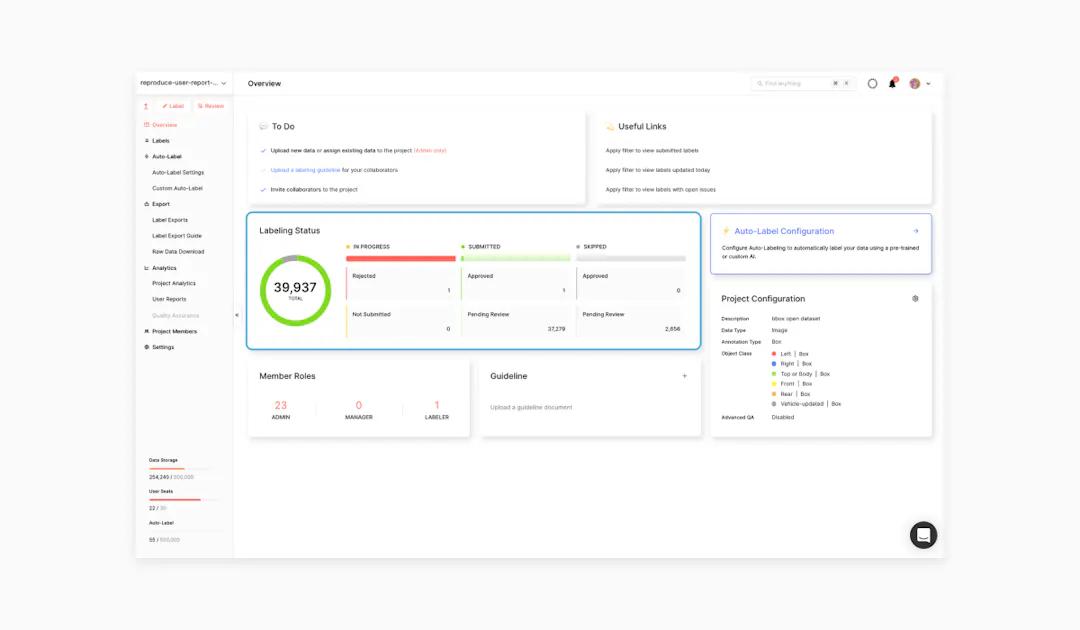

Keep your finger on the pulse of your projects with near real-time analytics

We’ve replaced our batch processing pipeline with a near real-time, event-driven pipeline, so your project analytics page, including the labeling status and quality assurance tabs, now updates in seconds instead of hours. This lets you discover data insights faster, uncover and respond to issues more quickly, and make decisions at the speed of your data instead of after the fact. It also makes your labeling operations more agile, since you can notice negative trends sooner and nip them in the bud.

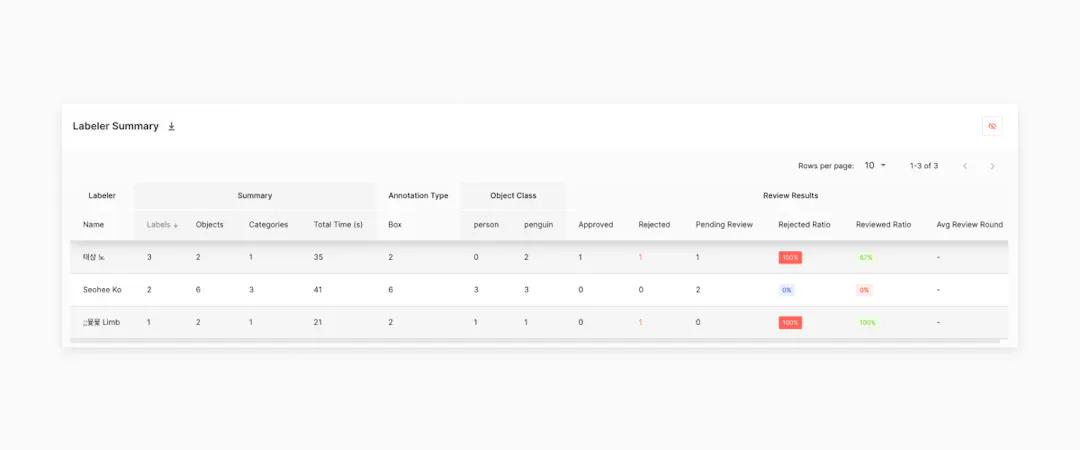

To make user reports more effective, we’ve made it easy to create and view the applied filter status and filter properties, along with the requested date, in the topmost dropdown menu. We’ve also added the ability to view rejected percent, reviewed percent, and avg review round on the Labeler Summary chart.

Finally, we’ve shortened the table row names on the Annotation Summary chart so you can see everything you need fast in one glance.

Filter and manage your data, issues, and exports more effectively

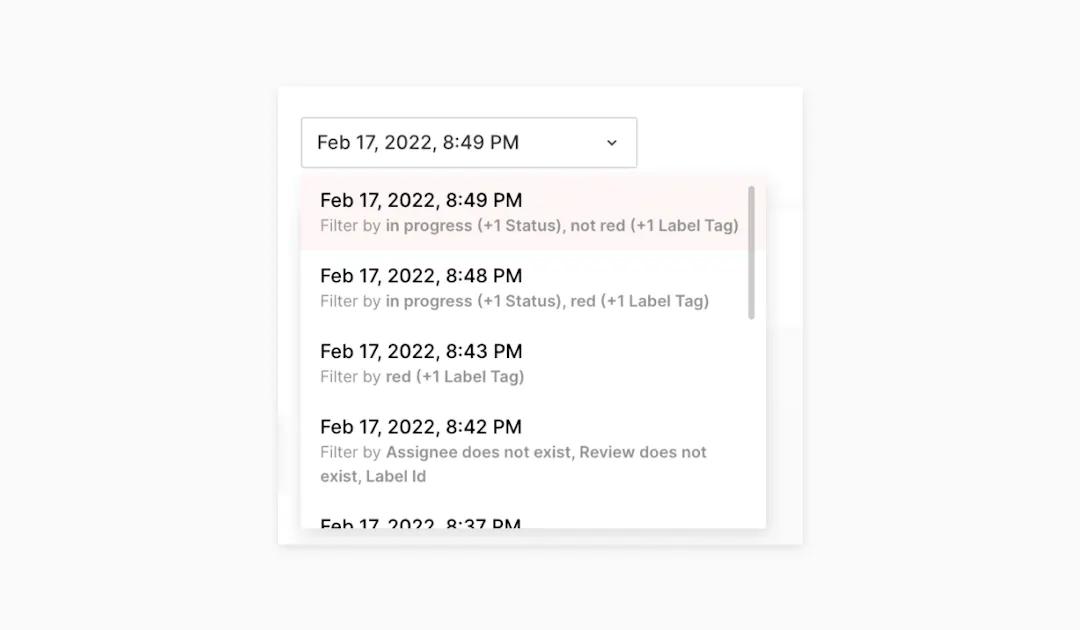

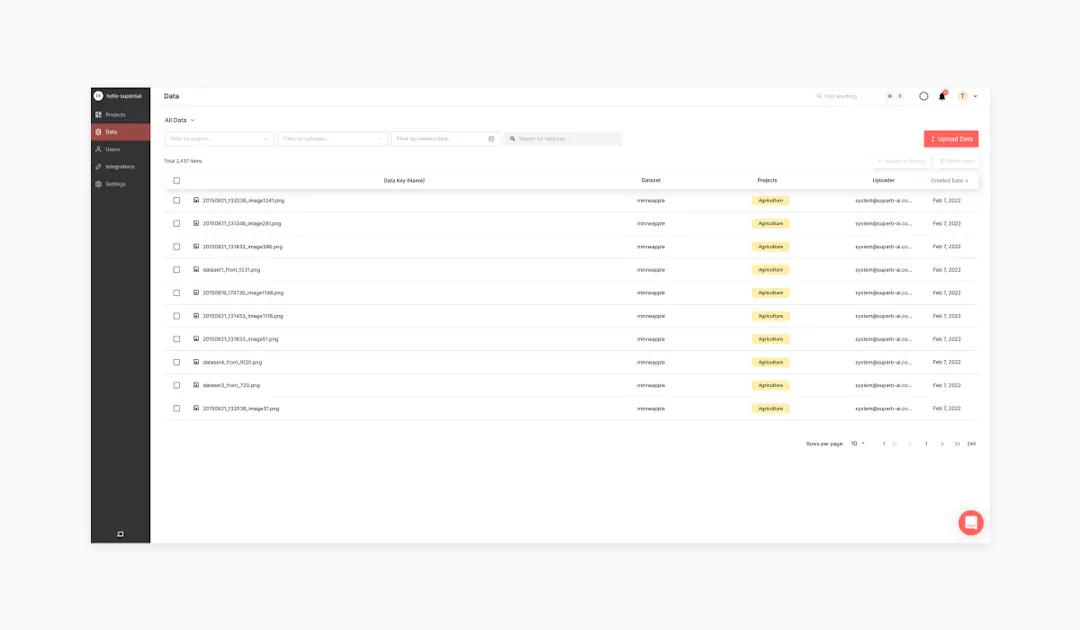

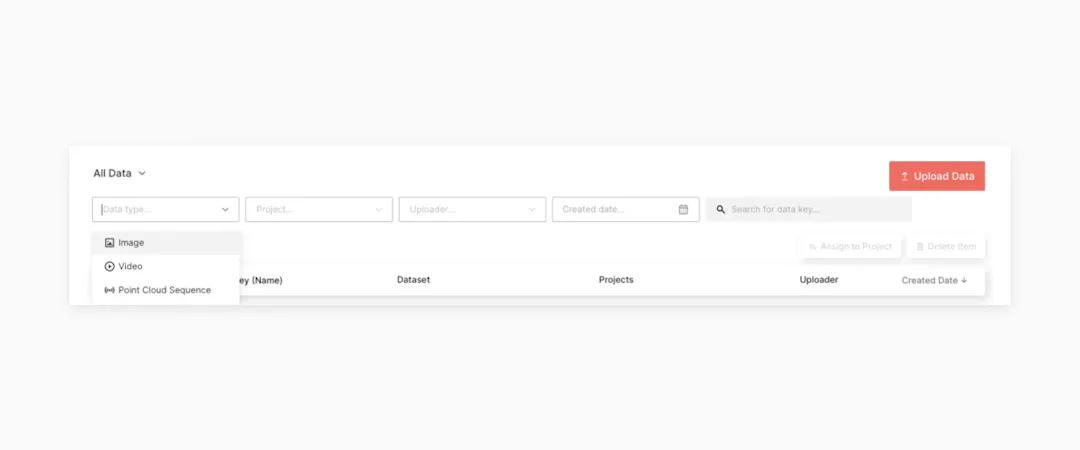

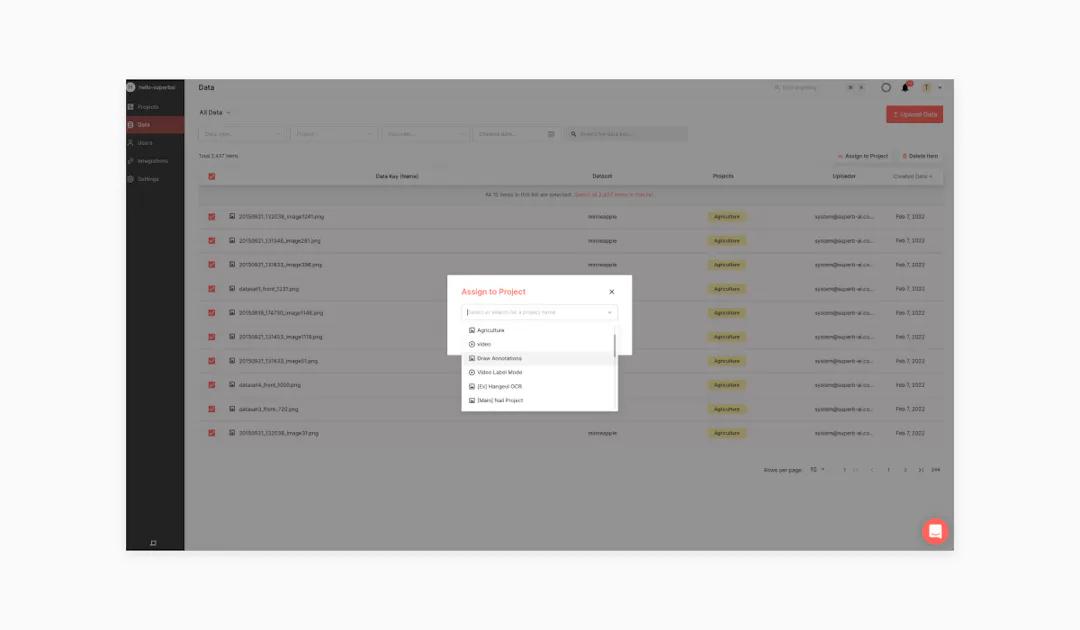

Next, we’ve reimagined how data is presented in the Suite, a change we think will have a big impact. To start, on the Data page, instead of showing a list of datasets, you’ll now see All Data by default, giving you a much broader view of the data available to you (you can easily switch back to the dataset list via a handy top dropdown menu if you prefer). We’ve also added more filters to help you find the exact data you need faster and more easily, including by project, uploader, created date, or data type, with a dropdown menu for each. Similarly, for image sequences, you can now sort issue threads by number and last update and filter them by current frame issue or all issues, making it easy to quickly pinpoint and resolve problems your teams may find along the way.

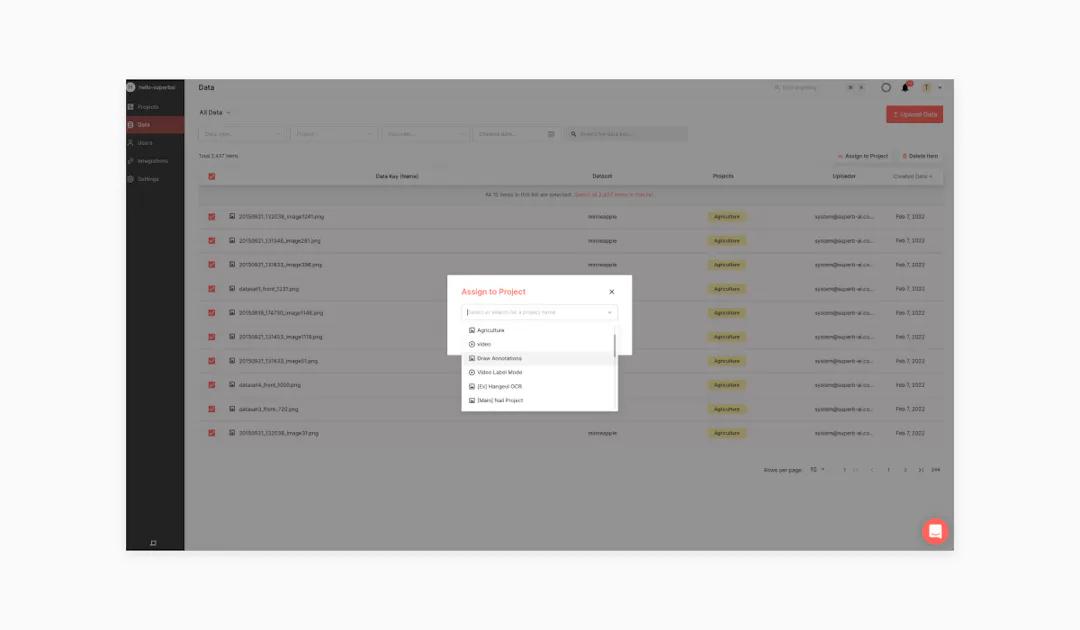

To make assigning data a little easier, the dropdown list when assigning data to a project.

In that same vein, we’ve made exports easier to work with through the card created in your export history whenever an export job is completed. Within a card, you can quickly view the filters you previously applied to the selected label list (i.e., all the labels contained within your export), as well as apply additional filters, allowing you to drill down into your data to an even finer degree and create a new, more focused export.

Label on the edge

Labeling just got a little easier when working on the edge. Annotations will now be applied up to the image boundary when drawing annotations. When working with bounding boxes, the drawn box will only contain the area within the image boundary if you release dragging outside the boundary. And when working with polygon segmentation, the annotation will automatically be applied to the nearest image edge when you click outside the boundary.
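As a rough illustration of the boundary behavior described above, here is a minimal Python sketch. It is not the Suite's actual implementation; the function names `clamp_point` and `clamp_box` are hypothetical, and it assumes pixel coordinates with the origin at the top-left corner of the image.

```python
from typing import Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def clamp_point(point: Point, width: float, height: float) -> Point:
    """Move a point that lies outside the image to the nearest point on its boundary.

    Hypothetical helper: for a rectangular image, clamping each coordinate
    independently yields the closest point inside or on the image edge.
    """
    x, y = point
    return (min(max(x, 0.0), float(width)), min(max(y, 0.0), float(height)))


def clamp_box(start: Point, end: Point, width: float, height: float) -> Box:
    """Build a bounding box from a drag that may have ended outside the image.

    Both drag endpoints are clamped first, so the resulting box only
    covers the area within the image boundary.
    """
    x0, y0 = clamp_point(start, width, height)
    x1, y1 = clamp_point(end, width, height)
    return (min(x0, x1), min(y0, y1), max(x0, x1), max(y0, y1))


if __name__ == "__main__":
    # Drag starts inside a 100x80 image and is released outside it.
    print(clamp_box((50, 40), (130, -10), width=100, height=80))
    # A polygon click to the left of the image snaps to the left edge.
    print(clamp_point((-5, 30), width=100, height=80))
```

The same clamping step covers both cases in this sketch: a box whose drag ends outside the image is trimmed to the boundary, and a polygon vertex clicked outside the image lands on the nearest edge.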

Other improvements

Data Labeling Automation

You can now request a label export and create a Custom Auto-Label (CAL) together with a single click. Also, object tracking for video annotation automation now supports a frequency filter that sets the minimum number of frames an object must appear in to be detected.
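To make the idea of a frequency filter concrete, here is a minimal Python sketch under stated assumptions. It does not reflect how the Suite implements tracking; the `(frame_index, track_id)` detection format and the function name `filter_tracks_by_frequency` are made up for illustration.

```python
from typing import Dict, List, Set, Tuple

Detection = Tuple[int, str]  # (frame_index, track_id), a hypothetical format


def filter_tracks_by_frequency(
    detections: List[Detection], min_frames: int
) -> List[Detection]:
    """Keep only detections whose track appears in at least `min_frames` distinct frames."""
    frames_per_track: Dict[str, Set[int]] = {}
    for frame_index, track_id in detections:
        frames_per_track.setdefault(track_id, set()).add(frame_index)

    # Tracks seen in too few frames are treated as noise and dropped.
    kept_tracks = {
        track_id
        for track_id, frames in frames_per_track.items()
        if len(frames) >= min_frames
    }
    return [d for d in detections if d[1] in kept_tracks]


if __name__ == "__main__":
    detections = [(0, "car"), (1, "car"), (2, "car"), (1, "bird")]
    # "bird" appears in a single frame, so it is filtered out at min_frames=2.
    print(filter_tracks_by_frequency(detections, min_frames=2))
```

Raising the threshold in a filter like this trades recall for precision: short-lived, possibly spurious tracks are dropped, at the cost of missing objects that are only briefly visible.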

Video Annotation

The image sequence timeline now includes:

• editing the selected object

• filtering the annotation object within the frame

• and deleting objects with multiple shapes at once

SDK/CLI

Image uploads of up to 20MB are now supported.

What’s next

We’re currently working on some really exciting stuff, including customizable shortcuts for the Command menu that make working your way easier than ever before, which should be out soon. Plus, keep an eye out for some big announcements in the next couple of months related to LiDAR annotation (starting with 3D point clouds), DataOps (a better way to sift through, decipher, and curate your data), and much more! We’re also working hard to bring together an entire catalog of training and How-To videos to get you from start to success with the Superb AI Suite, so be on the lookout for that.

Want to stay connected with everything going on in computer vision and MLOps? Check out The Ground Truth newsletter to get resources, insights, and news straight to your inbox - a completely sales-free zone.

About Superb AI

Superb AI is an enterprise-level training data platform that is reinventing the way ML teams manage and deliver training data within organizations. Launched in 2018, the Superb AI Suite provides a unique blend of automation, collaboration, and plug-and-play modularity, helping teams drastically reduce the time it takes to prepare high-quality training datasets. If you want to experience the transformation, sign up for free today.