Insight

Active Learning 101: A Complete Guide to Higher Quality Data (Part 2)

Tyler McKean

Head of Customer Success | 2022/04/28 | 11 min read

Introduction

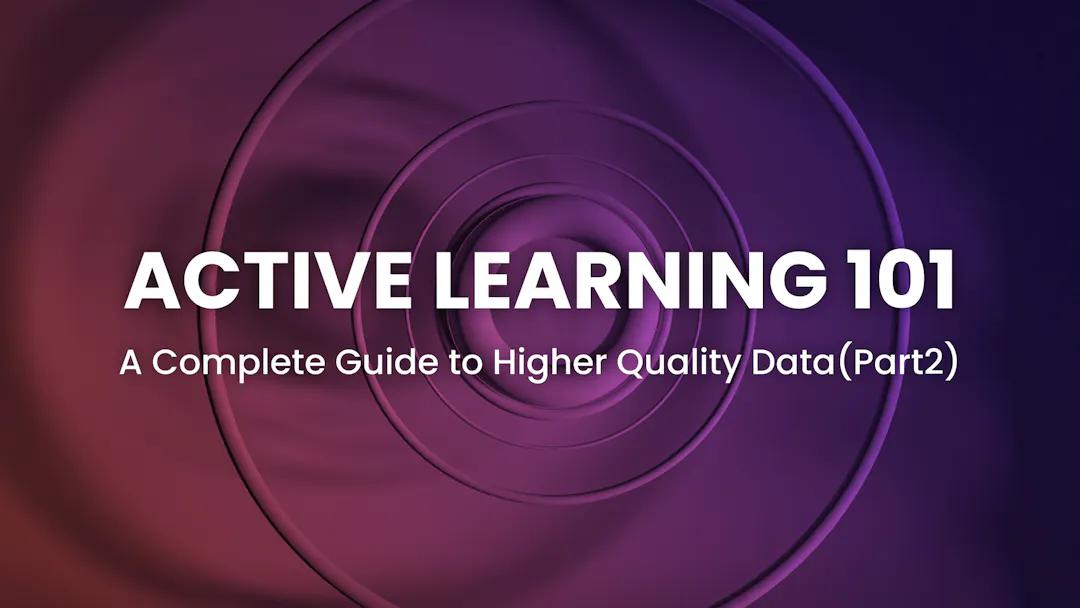

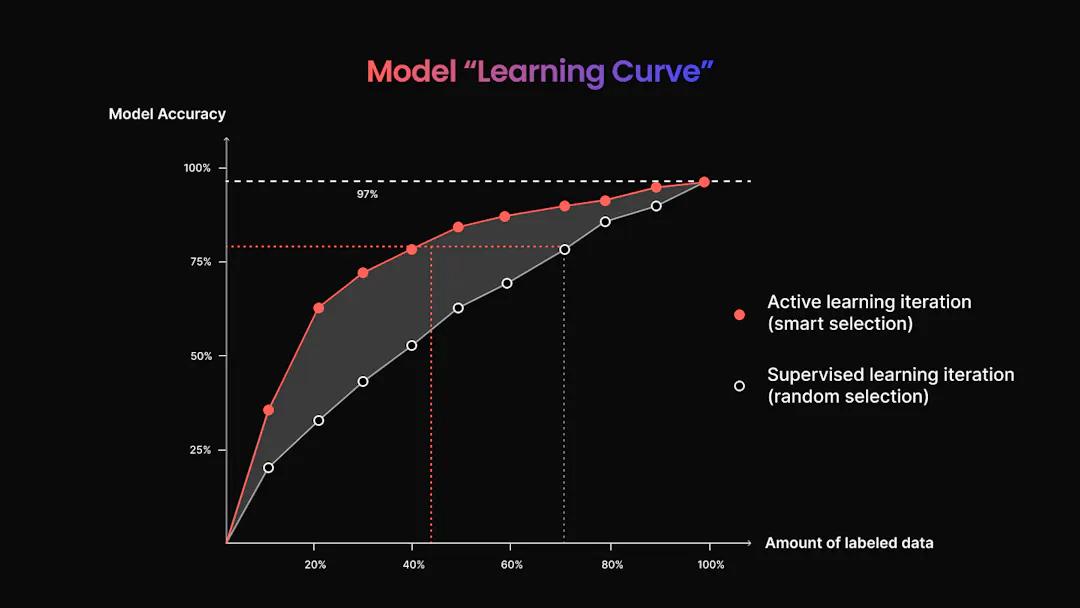

Building an active learning flow for your computer vision project reaps the benefits for those looking to lessen human-in-the-loop involvement and who want a faster, easier approach to building their model through supervised learning. Active learning promotes efficiency, cuts costs, and saves time by requiring less data than other approaches and emphasizing data quality over quantity. In Active Learning 101: A Complete Guide to Higher Quality Data (Part 1), we outlined the basics of Active Learning, how it’s different from other types of computer vision, and its popular subtypes. If you haven’t already, we suggest starting there first.

Diagram of the active learning workflow for computer vision tools.

A Quick Recap

Building a machine learning model can be cumbersome, with data labeling being the most time-consuming aspect of the entire process. However, data annotation is arguably one of the most important factors in building your model, and the strategy you take determines its outcome. Choosing the wrong methodology could squash your entire results. Finding the appropriate workflow for building out data annotations, thus choosing the correct model for your project, will largely determine its outcome. And knowing how much time this step alone takes presents a considerable hurdle for ML teams, especially those that are smaller and lack the resources to carry out robust operations. Active learning then alleviates the need for large data labeling teams and involves a more automated workflow.

Active learning is not a one-size-fits-all approach to model training. Depending on the data you’re working with and the end goal, a machine learning practitioner might want to choose one strategy over another. Random sampling, for example, grants each piece of data an equal likelihood of being chosen for labeling without any human influence. The purpose here is to avoid bias so that the results of the model are not skewed one way or another. In other instances, we can adapt active learning workflows to a lack of data samples in membership query synthesis. Here, we use basic editing tools to create new data samples. Need an image sample that’s close up? Use the cropping tool. Or maybe your data lacks images in a sunny setting; use the brightening tool. We also discuss pool-based sampling, which uses the labels with the lowest accuracy scores to tweak your model. And in stream-based selective sampling, your model uses its confidence threshold to dictate which pieces of data should be labeled. Knowing the basics of active learning and the different approaches is paramount in the project planning phase. In addition, understanding important measurements, such as accuracy, precision, and recall – and knowing how and when your data’s performance drops off are paramount.

Active learning is best used in models trained to complete predictable, repeatable tasks and does not work as well for those who learn as they go along. In a phenomenon known as reinforcement learning, your model modifies its behavior based on the mistakes it makes, i.e., a robot learning to walk will almost certainly fall before perfecting this action. Active learning is best avoided when these types of patterns are desired. And although the cost savings and time reduction in active learning models make it a desirable training method for many ML teams, the technology isn’t necessarily there. Adapting a company’s current software to be compliant with active learning protocols is often more troublesome than it’s worth, so they may opt-out of this route.

The Superb AI Active Learning Toolkit

In traditional machine learning, data labeling is a highly manual process carried out by human annotators, which are then responsible for querying the effectiveness of a model by hand or with limited tools. On the other hand, active learning combats this bottleneck by implementing an algorithmic approach designed to proactively identify subsets of training data that most efficiently improve performance. Superb AI leverages active learning to identify gaps in the training data. Rather than weighing each piece of data equally, active learning works to find the most valuable data that will lead to higher accuracy and efficiency.

Uncertainty Estimation

Developing a robust active learning toolkit relies heavily on an auto-label AI to accurately annotate data outside of your manually labeled ground truth set. When approaching automation, one must look at its ability to assess an image fairly and apply a precise label; otherwise, this tool is rendered useless. Various measurement techniques can be applied to examine a label’s validity, but not all yield the most promising results. Least confidence, for example, is a technique in which your model selects the data it is least optimistic about to be labeled. It’s highly popular and performs well for many teams, but a common problem is its tendency to predict a high confidence level in error when the model has been overfitted to the training data. Alternatively, examining the uncertainty levels of your data provides a statistical measurement of trust. In essence, how uncertain your model is of a piece of data can tell an ML professional where improvement needs to be made.

Though automating the labeling process is a huge win in machine learning, it poses the question of accuracy. If a machine annotates the remaining data, how do we know it can be trusted? Superb AI’s core active learning technology is rooted in the concept of uncertainty through its patented Uncertainty Estimation Tool, which is applied to auto-labeled datasets within each model. The Uncertainty Estimation tool works as an entropy-based querying strategy that selects instances within the training set based on the highest entropy levels in their predicted label distribution, using our underlying uncertainty sampling. This strategy uses a patented hybrid approach of both Monte Carlo and uncertainty distribution modeling methods.

Entropy: What it is and How it Fuels Active Learning 🚀

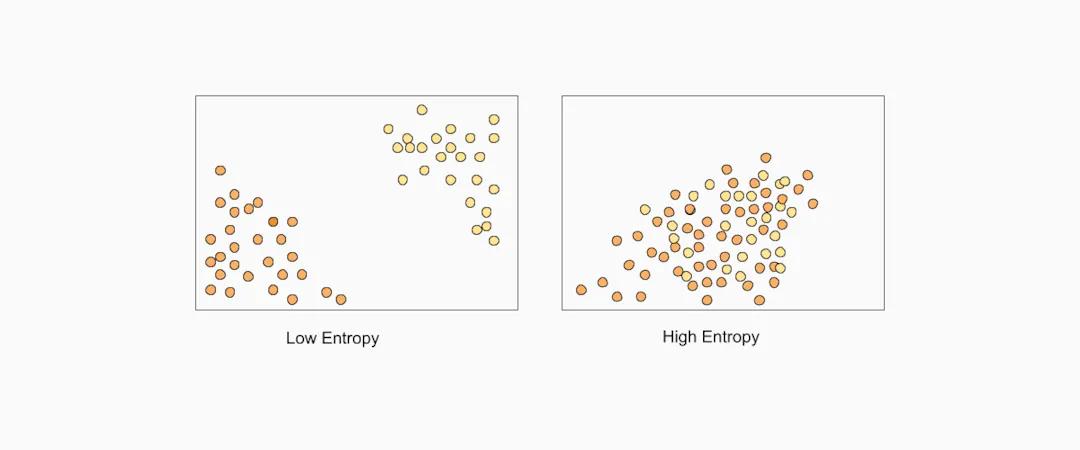

Depiction of low vs. high entropy fueling computer vision tools and technology.

To build an effective machine learning model, data must be diverse and represent highly possible, yet different, outcomes. We measure these dissimilarities using something known as entropy, which is used to identify pieces of data that may cause our model the most trouble or confusion. Highlighting differences and encouraging diverse examples in an active learning model leads to better results and faster adoption of the desired behavior.

We use the principle of uncertainty sampling to highlight which pieces of data to use for querying. In essence, our uncertainty estimation and active learning feature set aims to identify labeled data that the model has determined to have the highest output variance in its prediction. High output variance refers to the fluctuating predictions a model may make based on its data. As part of the training process, it is essential to minimize these variances or differences and tighten the model’s predictions through a querying strategy known as variance reduction. Without eliminating high instances of variances, our final model will fail to make consistent and actionable predictions upon implementation.

We apply these principles in the Superb AI Suite using our Uncertainty Estimation tool. With each iteration of CAL, a difficulty score of easy, medium, or hard is administered to calculate its information usefulness. An image that Custom Auto-Label found more challenging to annotate accurately will receive a higher difficulty score than its easily identifiable counterparts, rendering its predictions more uncertain. Images that present a greater obstacle for our auto-label technology to identify also pose the highest value by showcasing which types of examples can lead to better performance. On the contrary, images labeled as more difficult also strongly correlate with being incorrect. ML professionals can then focus on these individual labels and correct them, thus improving model performance.

Monte-Carlo Sampling and Dropout

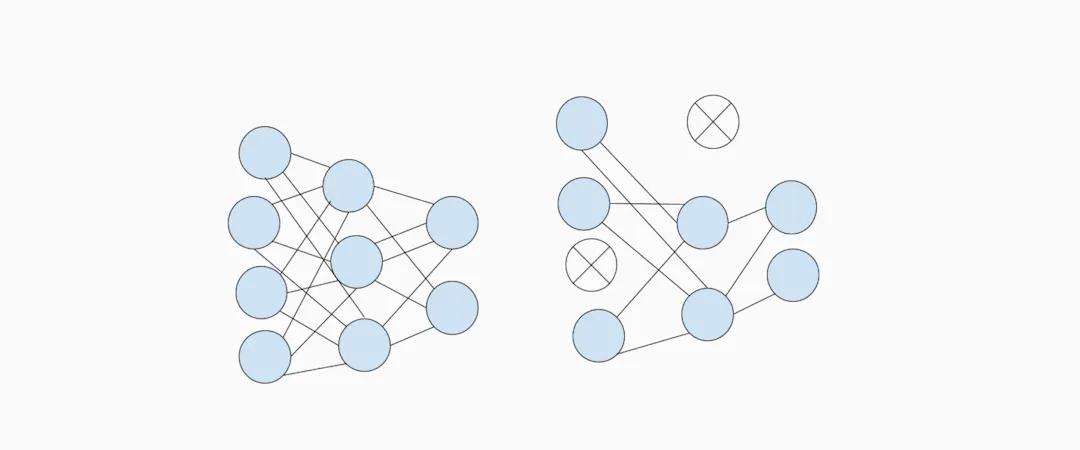

A full network of training data sit on the left, while the right shows data points that have been dropped out

A full network of training data sit on the left, while the right shows data points that have been dropped out (Source)

The Superb AI active learning tool kit relies heavily on uncertainty estimation and difficulty scores to enhance individual ML models. This is done through a method known as Monte-Carlo sampling, which aims to establish multiple model outputs for each data input through random selection. After each iteration, uncertainty levels are then calculated. But how do we continuously input the same data samples to paint a better picture of uncertainty? ML engineers will often implement the use of dropout layers. To do this, one must theoretically “switch” different pieces of data on and off for our model to evaluate. With a less predictable data input, our model becomes more sophisticated in making predictions. With Superb AI, your team can filter different data tags, such as your ground truth and validation sets, in multiple combinations to display different levels of uncertainty. Then, using these pieces of data with higher uncertainty, you can once again retrain your model to yield higher performance and accuracy.

A Closer Look at a Real Application

As stated, the philosophy and purpose behind active learning are to use smaller yet more valuable subsets of training data to increase efficiency and performance in your model. This translates to less time spent labeling by your team as your model identifies the most valuable information resulting in higher accuracy. Let’s look at a real-world example of how we can leverage active learning in the Superb AI Suite to identify manufacturing defects in a steel pipe dataset accurately.

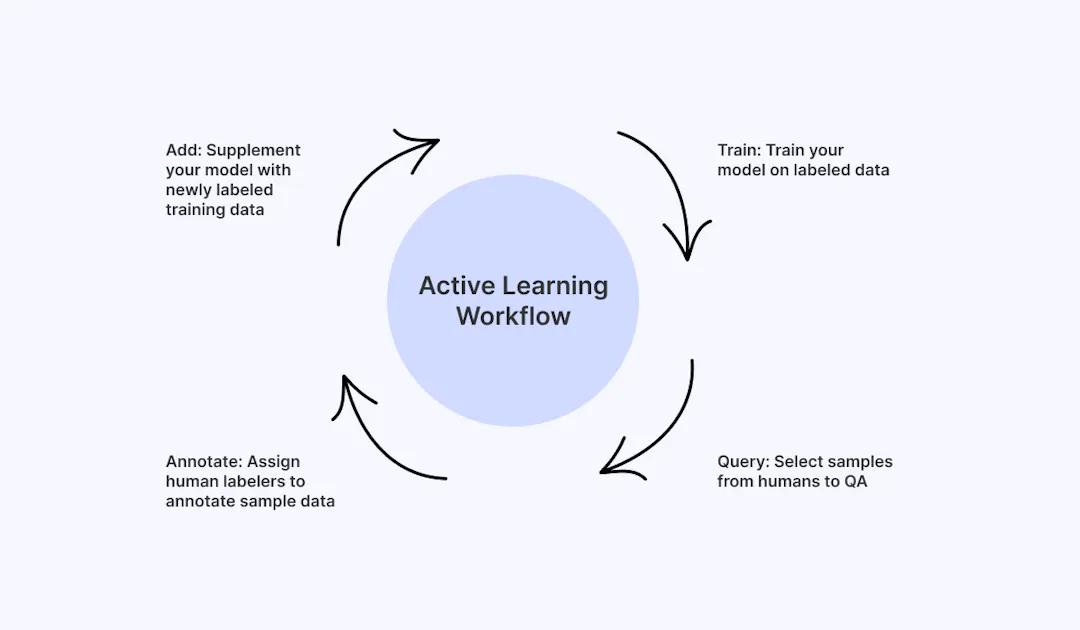

1. Set up Your Project and set Your Objectives

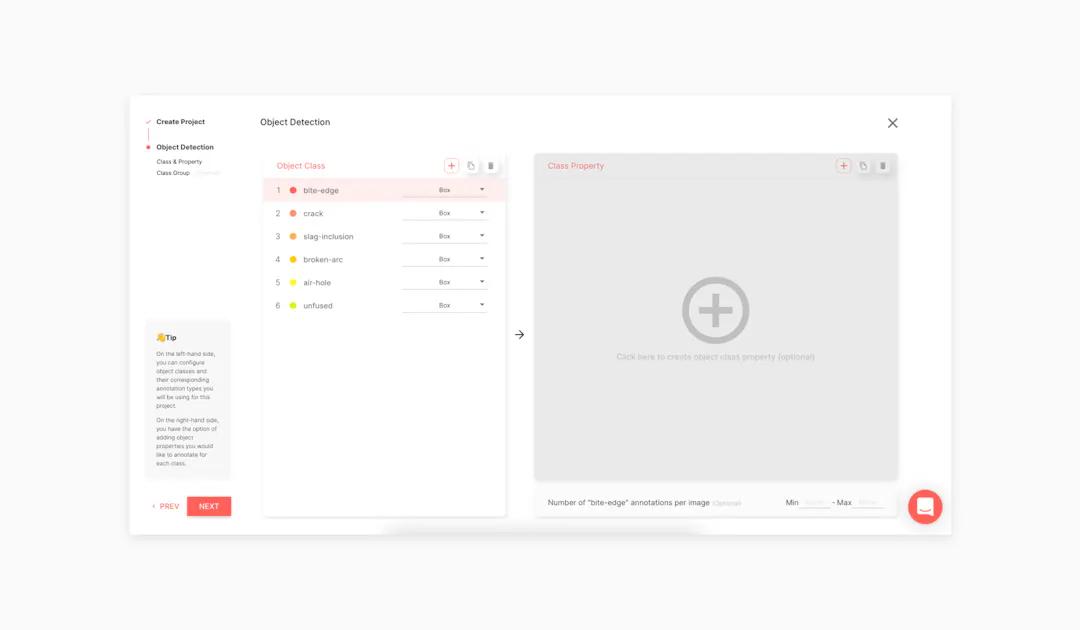

Setting you project on Superb AI's computer vision platform

Our goal with this project is to use active learning principles to build an accurate Custom Auto-Label with a limited sample size of only 774 images. We’ll begin by selecting bounding boxes as our annotation type and defining object classes and their properties. Since the purpose of this model is to identify surface-level defects, we’ll divide our object classes as such: bite-edge, crack, slag-inclusion, broken-arc, air-hole, and unfused. You also can group classes, which is helpful if you’re working with dozens or even hundreds of them.

2. Establish Your Image Sets

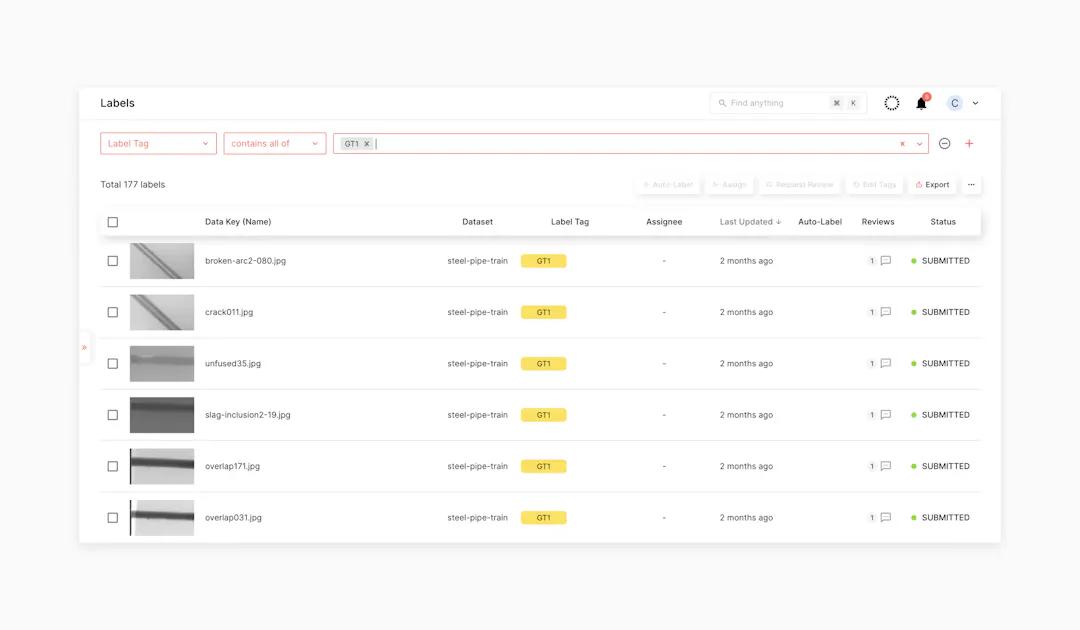

Use tags in Superb AI Suite to separate each image set and categorize them accordingly.

The first step in our experiment is to use random sampling to establish our validation and ground truth sets. Here, we can use tags to separate each image set and categorize them accordingly. In this instance, our validation set comprises 178 images, acting as a gold standard to which to compare our data against throughout each iteration. Validation sets, such as this one, allow us to compare accuracy and efficiency gains over time and highlight potential biases in our training data. An important thing to remember when conducting an experiment in the Superb AI Suite is to meticulously tag each dataset accordingly and assign it a group name. This eliminates room for error and helps to separate each iteration. We’ll call this set VAL 1. Next, we must randomly assign labels to make up our initial ground truth set. We can do this by selecting 177 images to be labeled and calling it GT1. Our subject matter experts will manually label each of these sets within the Superb AI platform. It’s worth noting that using experts in this field at the start of our projects lessens, or eliminates, the need for them later on, thus reducing cost.

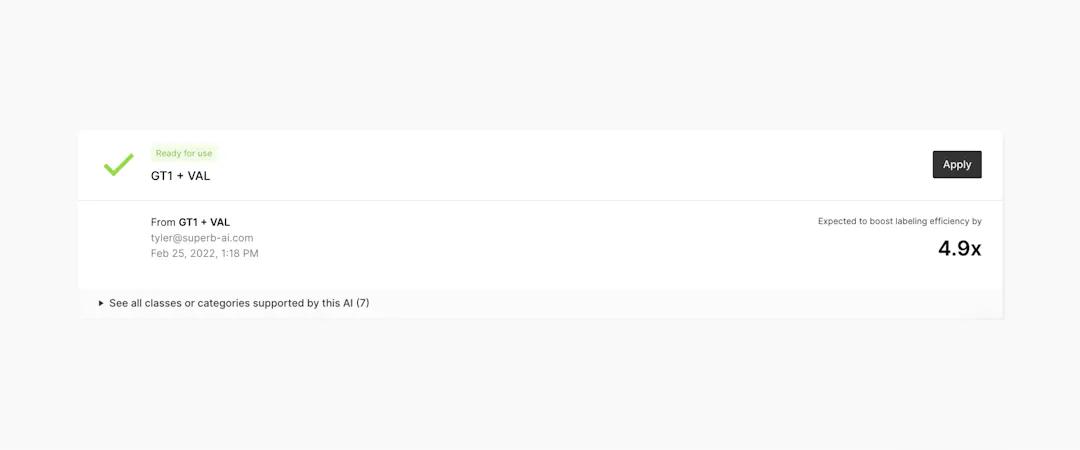

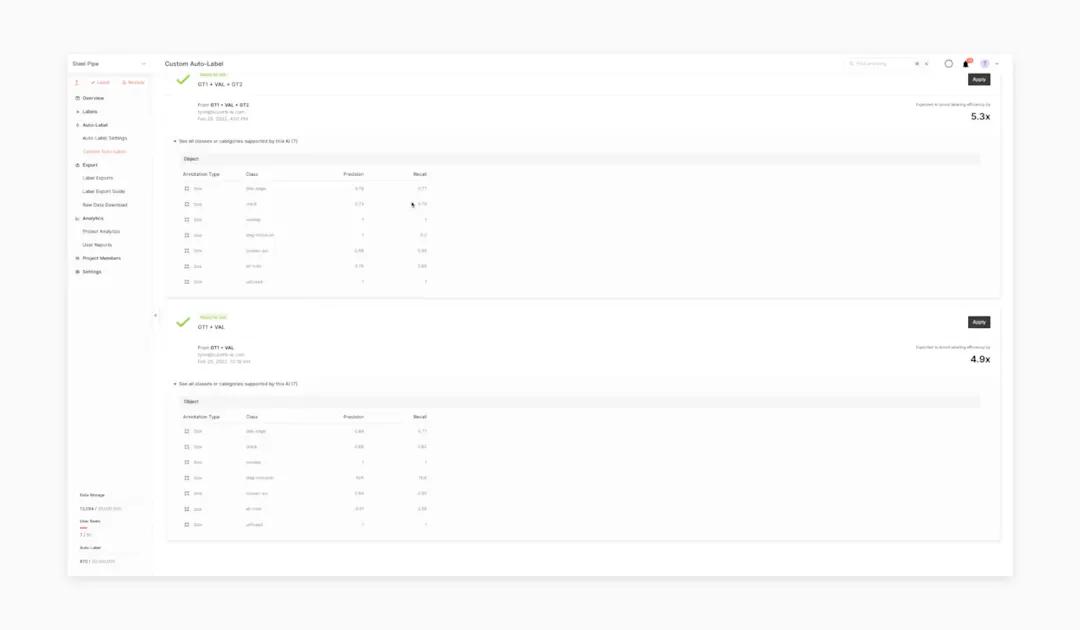

3. Train Your First Iteration

Superb AI Custom Auto Label

After the initial step of manually labeling our first ground truth and validation sets, we can use the export and train functions to train our first Custom Auto Label using these image sets. Carefully title your first CAL to avoid confusing this model with future iterations; we’ll keep it simple and call this CAL1. From here, we can click “Create Custom Auto-Label” and watch as our first CAL is formed. Once the first iteration of your model is trained, you will see which classes performed well under CAL and which ones still need improvement. The measurements we’re looking for are both precision and recall. In this instance, broken-arc and bite-edge performed very well for a first iteration, while other classes like crack did not; slag inclusion, for example, was not distributed appropriately in the training and validation sets and therefore could not be measured. This first reference measurement lays the groundwork for how well your custom auto-label model performs and will continue to perform, as well as what your validation split looks like for future use. An important metric to note for your team is the Expected Labeling Efficiency Boost at the top, which displays how much time your team is expected to save using CAL versus annotating manually. As of this step in the project, CAL annotated the validation set correctly 4.9x faster than if it was done manually. This metric will only improve over time. From here, we can apply this first iteration of CAL to our model.

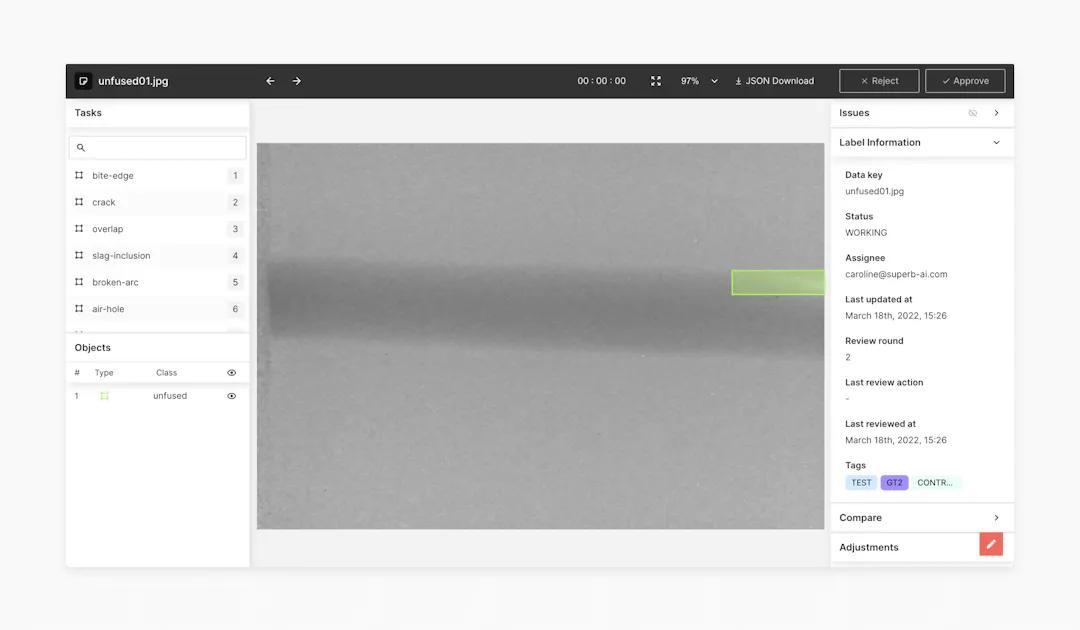

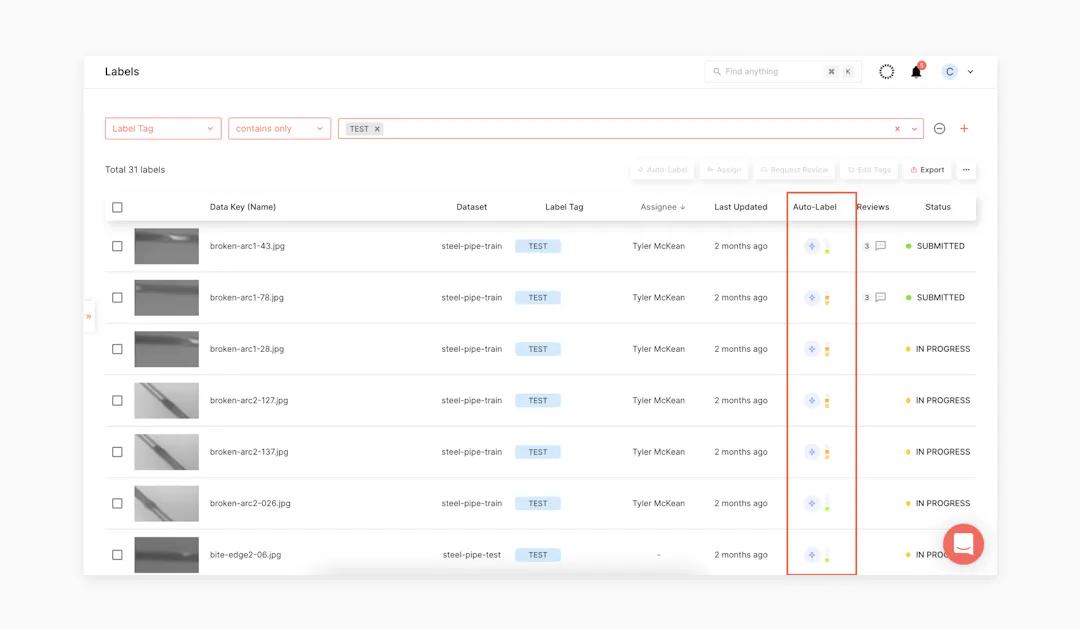

4. Apply Your Custom Auto-Label to Your Test Set

Apply your newly created model to your project settings and enable the auto-label feature.

After a Custom Auto-Label is trained and deemed appropriate for use, you can apply your newly created model to your project settings and enable the auto-label feature. Once the model is configured, all you have to do is select the data within your label list and click the auto-label button to kick off the task. To do this, we’ll need to use our filtering tool and isolate the data partitioned in the test group and exclude all data that is part of GT1 and the validation set. Then, we can request that these remaining images be auto-labeled. Once auto-label is applied to all of the images in the test set, you’ll see that the images that were once unannotated now contain labels generated by CAL.

5. Leverage Active Learning and Uncertainty Estimation

Superb AI Uncertainty Estimation tool automatically assigns a difficulty score to each image

Once auto-label has been applied to your dataset, the Superb AI Uncertainty Estimation tool automatically assigns a difficulty score to each image, indicating which labels need further querying. When building your model, images defined as easy are necessary to establish familiarity, but as previously stated, they do nothing to improve your model. On the other hand, images classified as either hard or medium help showcase where the model needs improvement and which examples can lead to better performance. Using our filters to isolate these harder examples allows subject matter specialists to correct each image and incorporate them in the following training iteration. In the Superb AI Suite, difficulty scoring is displayed in the auto-label column by having one, two, or three bars of green, yellow, and red.

6. Define Your Ground Truth 2 Subset

Define Your Ground Truth 2 Subset

For the next step in this experiment, we utilize difficulty scoring produced by uncertainty estimation to define a new subset that is most likely to contain the highest degree of information usefulness for our model. We can determine this dataset by isolating 251 examples, all classified as medium and hard, as well as images that our auto-label AI failed to annotate. Using our tags, we can then categorize these images as GT2 before having your team annotate each one before training. Once trained, we can then add it to our training set and use Superb AI to train the second iteration of our Custom Auto-Label model, this time with our original ground truth, GT1, validation, and our newly identified GT2 subset. As in the last iteration, we can see how well our Custom Auto-Label performed and compare the two results. This time, with our second CAL, efficiency improved even more, measuring an efficiency boost of 5.3x compared to the 4.9x of our last iteration. Because we expanded our ground truth size and applied a pool-based sampling active learning method to GT2 to isolate harder labels via our Uncertainty Estimation Tool, we were able to yield better results than our previous Custom Auto-Label.

The Active Learning Advantage

The above experiment showcases how using active learning increases the efficiency and accuracy of your project over time. But how does it compare to traditional methods, and how can we display this in our model? Taking this experiment a step further, we can unbiasedly create an additional subset of training data using random sampling. To carry this out, we need first to form a control group that is the same size as our GT2 dataset identified by active learning and then retrain a custom auto-label. The difference here is that the data applied to the control group will be randomly selected rather than strategically assigned. We’ll repeat the same steps above, labeling our CAL as Control to easily separate it from past iterations and then train the model. Once applied, Superb AI can calculate the efficacy of this model’s iteration.

Superb AI can calculate the efficacy of a model’s iteration.

You’ll find that the results from our control group show improvement over our initial iteration of custom auto-label, with increases in precision and recall and an efficiency boost score of 5.1x. This is to be expected, as our initial dataset only utilized our ground truth labels. However, the difference is even more significant when compared to our CAL 2 model using active learning. Examining these results, you’ll see that precision and recall improved in the majority of classes. From these findings, we can conclude that an active learning workflow not only enhances, but also accelerates model training and accuracy.

The Takeaway

Implementing active learning into your ML workflow is a sure-fire way to expedite and improve the model training process over traditional methods. Approaching your data intelligently and leveraging the most valuable examples to train your model leads to faster and more quantifiable improvements. The Superb AI Suite demonstrates how active learning and custom auto-label can be a superpower for your ML team and save you time and money. Because of how easy it is to edit and retrain your model, the Superb AI active learning toolkit is well-equipped to adapt to your business needs as they become greater and more complex. Stay tuned for our Active Learning 101: Part 3 to learn more about best practices, customer success stories, and what’s next for Superb AI.

Related Posts

Insight

⑪ Germany's Physical AI Moment: Siemens, BMW, and the Robot Unicorn Counteroffensive

Hyun Kim

Co-Founder & CEO | 15 min read

Insight

⑩ Big Tech Physical AI Trends (2): Tesla vs. Amazon Strategy Breakdown

Hyun Kim

Co-Founder & CEO | 10 min read

Insight

⑨ Big Tech Physical AI Trends (1): NVIDIA vs. Google Strategy Breakdown

Hyun Kim

Co-Founder & CEO | 7 min read

About Superb AI

Superb AI is an enterprise-level training data platform that is reinventing the way ML teams manage and deliver training data within organizations. Launched in 2018, the Superb AI Suite provides a unique blend of automation, collaboration and plug-and-play modularity, helping teams drastically reduce the time it takes to prepare high quality training datasets. If you want to experience the transformation, sign up for free today.